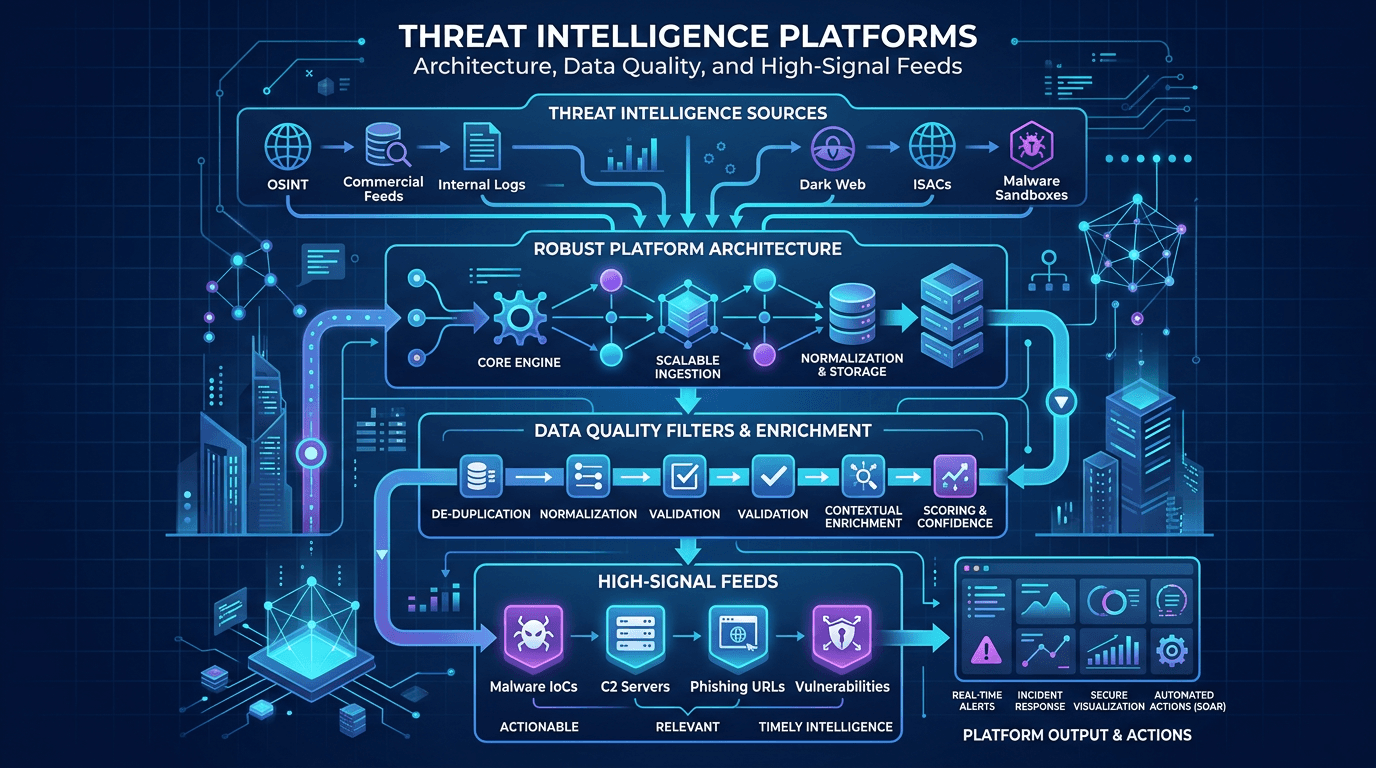

Threat Intelligence Platforms: Architecture, Data Quality, and High-Signal Feeds

Design TIPs and intel pipelines that scale: normalization, confidence scoring, deduplication, API-first delivery, and how to pair platform investments with analyst workflows.

Threat intelligence platforms (TIPs) promise a single pane of glass for IOCs, actor reports, and dissemination workflows. Reality is messier: without disciplined data quality, a TIP becomes an expensive duplicate repository—while analysts still pivot across spreadsheets. This article covers reference architecture patterns, normalization strategies, and governance practices that keep threat intelligence actionable. It also explains how specialized reputation APIs for IPs and domains—such as isMalicious—slot alongside a TIP rather than replacing it.

Reference Architecture: Ingest, Normalize, Enrich, Act

A robust pipeline typically includes:

- Ingestion — feeds (STIX/TAXII, CSV, JSON APIs), email newsletters (manual), MISP sync, partner portals.

- Normalization — map vendor-specific fields to canonical objects; lowercase domains, canonicalize IPs, validate SHA-256 formatting.

- Deduplication — merge duplicate IOCs with union of sources and highest confidence metadata.

- Enrichment — geolocation, ASN, passive DNS, WHOIS age, CVE references, MITRE technique tags.

- Disposition — tagging for blocking tiers, hunt queues, or informational only.

- Distribution — SOAR playbooks, EDR blocklists, firewall policies, ticketing.

Each stage should emit metrics: ingestion lag, dedupe ratio, enrichment failures, distribution success rates.
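The normalization stage listed above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names and the `NORMALIZERS` table are hypothetical, and it assumes indicators arrive already split by type.

```python
import ipaddress
import re

# A SHA-256 digest is exactly 64 hexadecimal characters.
SHA256_RE = re.compile(r"[0-9a-f]{64}")


def normalize_domain(value: str) -> str:
    """Trim whitespace, drop a trailing root dot, and lowercase."""
    return value.strip().rstrip(".").lower()


def normalize_ip(value: str) -> str:
    """Return the canonical text form; raises ValueError if invalid."""
    return str(ipaddress.ip_address(value.strip()))


def normalize_sha256(value: str) -> str:
    """Lowercase the digest and reject anything that is not 64 hex chars."""
    candidate = value.strip().lower()
    if not SHA256_RE.fullmatch(candidate):
        raise ValueError(f"not a SHA-256 hex digest: {value!r}")
    return candidate


# Hypothetical dispatch table keyed by indicator type.
NORMALIZERS = {
    "domain": normalize_domain,
    "ip": normalize_ip,
    "sha256": normalize_sha256,
}


def normalize(ioc_type: str, value: str) -> str:
    return NORMALIZERS[ioc_type](value)
```

Rejecting malformed values with an exception, instead of passing them through, gives the pipeline a natural place to count normalization failures as one of the per-stage metrics.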

Data Models: STIX 2.x as Lingua Franca

STIX objects express indicators, malware, campaigns, and relationships. Even if your TIP vendor abstracts STIX, understanding object graphs helps analysts reason about campaign-level context versus flat IOC lists.

Invest in training: teams that treat STIX purely as opaque JSON miss relationship queries that accelerate investigations.

Confidence and Provenance

Every indicator should carry:

- Source identity (vendor, internal research, partner share).

- Confidence (numeric or categorical).

- Timestamp (first seen, last seen).

- TLP or handling markings.

Without provenance, analysts cannot justify blocking decisions to auditors—or unwind mistakes quickly.
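One way to enforce these fields is to make them mandatory at construction time. The sketch below is an assumption about how such a record might look, not a vendor schema: the `Indicator` class, the 0 to 100 confidence scale, and the field names are illustrative, while the marking labels follow TLP 2.0.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class TLP(Enum):
    CLEAR = "TLP:CLEAR"
    GREEN = "TLP:GREEN"
    AMBER = "TLP:AMBER"
    AMBER_STRICT = "TLP:AMBER+STRICT"
    RED = "TLP:RED"


@dataclass(frozen=True)
class Indicator:
    ioc_type: str
    value: str
    source: str            # vendor, internal research, or partner share
    confidence: int        # assumed 0-100 scale
    first_seen: datetime
    last_seen: datetime
    tlp: TLP

    def __post_init__(self):
        # Refuse records that cannot be justified to an auditor later.
        if not self.source:
            raise ValueError("source identity is required")
        if not 0 <= self.confidence <= 100:
            raise ValueError("confidence must be between 0 and 100")
        if self.last_seen < self.first_seen:
            raise ValueError("last_seen precedes first_seen")
```

Because the dataclass is frozen, an update to confidence or `last_seen` has to create a new record, which keeps an implicit history of who asserted what and when.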

Deduplication Strategies

Duplicates arise when multiple feeds report the same indicator, such as a phishing domain. Merge keys might combine indicator type, value, and threat type. Collapse duplicates but preserve multi-source lineage: knowing that three independent sources reported an IP strengthens actionability.
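A merge keyed on type, value, and threat type might look like the following sketch. The record layout (`type`, `value`, `threat_type`, `source`, `confidence`) is an assumption for illustration, and values are expected to be normalized before they reach this step.

```python
def dedupe(records):
    """Collapse records sharing (type, value, threat_type) into one entry.

    The merged entry keeps the union of sources and the highest confidence.
    """
    merged = {}
    for rec in records:
        key = (rec["type"], rec["value"], rec["threat_type"])
        entry = merged.get(key)
        if entry is None:
            merged[key] = {
                "type": rec["type"],
                "value": rec["value"],
                "threat_type": rec["threat_type"],
                "sources": {rec["source"]},
                "confidence": rec["confidence"],
            }
        else:
            entry["sources"].add(rec["source"])
            entry["confidence"] = max(entry["confidence"], rec["confidence"])
    return list(merged.values())


def dedupe_ratio(records):
    """Share of incoming records removed by deduplication (0.0 if empty)."""
    if not records:
        return 0.0
    return 1 - len(dedupe(records)) / len(records)
```

Counting sources only strengthens a verdict when the sources are actually independent. Two feeds that republish the same upstream list should be treated as one source, which this sketch does not attempt to detect.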

API-First Integration for Modern Stacks

Batch uploads are insufficient for high-velocity environments. Prefer streaming APIs or message queues for near-real-time updates. Rate limits and backoff policies should be explicit in runbooks—SOC automation fails loudly when APIs throttle silently.
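An explicit backoff policy can be as small as the sketch below, which retries a throttled call with exponential backoff and full jitter. The `RateLimited` exception and the `call_with_backoff` helper are hypothetical; a real client would raise something equivalent when the provider answers with HTTP 429, optionally carrying the provider's suggested wait.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical signal that the upstream API throttled the request."""

    def __init__(self, retry_after=None):
        super().__init__("rate limited")
        self.retry_after = retry_after  # seconds suggested by the provider, if any


def call_with_backoff(call, max_attempts=5, base_delay=0.5, max_delay=30.0,
                      sleep=time.sleep):
    """Invoke call(); on RateLimited, wait and retry up to max_attempts times."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except RateLimited as exc:
            if attempt == max_attempts:
                raise  # surface the failure loudly instead of dropping the job
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            if exc.retry_after is not None:
                delay = exc.retry_after
            else:
                delay = random.uniform(0, ceiling)  # full jitter
            sleep(delay)
```

Re-raising after the final attempt is deliberate: a job that quietly gives up is the silent-throttling failure the runbook is meant to prevent.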

During trials, buyers compare “TIP API latency” and “bulk enrichment limits”; vendors that publish transparent limits reduce evaluation friction.

Pairing TIPs with Specialized Reputation Services

General-purpose TIPs excel at workflow and correlation; specialized services excel at continuously updated IP/domain risk scoring tuned for blocking decisions. Integrate isMalicious-style APIs at alert enrichment time so analysts see consolidated reputation without leaving their case management tool.

Avoid double-paying for identical datasets—periodically compare feed overlap.
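A periodic overlap check can start with pairwise Jaccard similarity between the indicator sets each feed contributes. This is a rough sketch: the feed names are made up, and the choice of Jaccard (instead of, say, one-directional containment) is an assumption about what "overlap" should mean.

```python
from itertools import combinations


def feed_overlap(feeds):
    """Return the Jaccard similarity for every pair of feeds.

    feeds maps a feed name to the set of normalized indicator values it supplied.
    """
    overlap = {}
    for a, b in combinations(sorted(feeds), 2):
        union = feeds[a] | feeds[b]
        shared = feeds[a] & feeds[b]
        overlap[(a, b)] = len(shared) / len(union) if union else 0.0
    return overlap
```

A pair scoring close to 1.0 is a candidate for consolidation. A low score on its own says nothing about quality, only that the feeds cover different indicators.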

Data Quality Tests and Synthetic IOCs

Run automated tests that ingest known-good and known-bad samples to verify parsing pipelines after upgrades. Synthetic IOCs (internally generated, clearly labeled) validate distribution paths without risking production blocks—use safe testing tenants.
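Synthetic indicators are safest when they come from address space that can never belong to a real host. The sketch below assumes the RFC 5737 documentation networks and the reserved `.example`, `.test`, and `.invalid` top-level domains are acceptable for this purpose; the helper names and the `synthetic-test` tag are hypothetical.

```python
import ipaddress

# IPv4 blocks reserved for documentation (RFC 5737).
DOC_NETS = [ipaddress.ip_network(n)
            for n in ("192.0.2.0/24", "198.51.100.0/24", "203.0.113.0/24")]
# Top-level domains reserved for testing and documentation.
RESERVED_TLDS = (".example", ".test", ".invalid")


def is_safe_synthetic(ioc_type, value):
    """True only if the value lives in reserved, non-routable test space."""
    if ioc_type == "ip":
        addr = ipaddress.ip_address(value)
        return any(addr in net for net in DOC_NETS)
    if ioc_type == "domain":
        return value.lower().endswith(RESERVED_TLDS)
    return False


def make_synthetic_iocs(count):
    """Generate clearly labeled IP indicators from 192.0.2.0/24."""
    if not 1 <= count <= 254:
        raise ValueError("count must be between 1 and 254")
    return [
        {"type": "ip", "value": f"192.0.2.{i}", "tags": ["synthetic-test"]}
        for i in range(1, count + 1)
    ]
```

Running `is_safe_synthetic` as a guard before any test upload means a typo in a test fixture fails the check instead of blocking a real address.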

Human-in-the-Loop Curation

Automation cannot remove all judgment. Maintain analyst shifts to review medium-confidence feeds, retire false positives, and annotate actor context. Curation is a product; budget headcount accordingly.

Storage, Retention, and Cost Management

Raw intelligence accumulates fast. Tier storage: hot indexes for recent data; cold archives for compliance. Define retention aligned with legal requirements—some jurisdictions limit how long you may store personal data derived from logs even if indicators are technical.

Search and Investigation UX

Analysts need fast search across IOC values, actor names, and campaign tags. Poor search UX drives exports to spreadsheets—defeating platform investments. Demand sub-second queries for common lookups at RFP stage.

Measuring Platform ROI

Meaningful KPIs:

- Reduction in manual copy/paste between tools (time studies).

- Faster MTTR for incidents enriched via TIP.

- Percentage of alerts auto-closed due to high-confidence context.

- Analyst satisfaction surveys—qualitative but predictive of adoption.

Governance: Who Owns the TIP?

Centralized CTI teams often own the TIP; alternatively, platform engineering owns infrastructure while CTI owns content. Ambiguity breeds configuration drift. Document RACI matrices.

Security of the Intelligence Platform

TIPs contain sensitive partner data and internal incident artifacts. Apply least privilege, MFA, and segmentation—ironically, intelligence stores are high-value targets.

Disaster Recovery and Offline Mode

During provider outages, maintain cached IOC snapshots for critical blocking tiers. This matters most while vulnerabilities listed in CISA's Known Exploited Vulnerabilities (KEV) catalog are under active exploitation and attackers move fast.

Common Failure Modes

- Feed hoarding without dedupe or confidence scoring.

- Ignoring internal incidents as first-class intelligence.

- Letting blocklists grow without TTL—stale IPs harm users and reputation.

- Neglecting developer ergonomics—bad APIs doom automation.
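The blocklist TTL failure mode above is straightforward to guard against in code. The following sketch expires entries whose last sighting is older than a per-type TTL; the specific durations are placeholders chosen for illustration, not recommendations, and the entry layout is assumed.

```python
from datetime import datetime, timedelta, timezone

# Placeholder TTLs: IPs churn quickly, file hashes stay valid much longer.
DEFAULT_TTL = {
    "ip": timedelta(days=7),
    "domain": timedelta(days=30),
    "sha256": timedelta(days=365),
}


def prune_blocklist(entries, now, ttl=DEFAULT_TTL):
    """Split entries into (keep, expired) based on time since last_seen."""
    keep, expired = [], []
    for entry in entries:
        age = now - entry["last_seen"]
        if age > ttl[entry["type"]]:
            expired.append(entry)
        else:
            keep.append(entry)
    return keep, expired
```

Returning the expired entries instead of discarding them lets the pipeline log what was removed, which helps when someone later asks why an address stopped being blocked.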

Conclusion

A threat intelligence platform is only as valuable as the data quality and workflows wrapped around it. Invest in normalization, provenance, and measurable outcomes—then integrate specialized enrichment for network reputation to give analysts a complete picture. Platforms that emphasize analyst experience and transparent APIs win adoption; those that prioritize checkbox features without pipeline discipline become shelfware.

Search-optimized technical content should address architecture and operations—not only definitions—to match evaluation-stage queries in 2026.

Multi-Tenancy and MSSP Considerations

Managed security providers serving multiple clients need strict tenant isolation, per-customer handling rules, and billing-aware usage metering for API calls. Architecture reviews should validate that cross-tenant leakage cannot occur through misconfigured search indices.

Machine Learning: Promises and Pitfalls

ML can cluster related domains or rank risky IPs, but models require governance, explainability, and periodic retraining on fresh adversarial examples—attackers adapt. Use ML as assistance, not sole adjudication, especially for blocking actions affecting availability.

Aligning with Zero Trust

Zero Trust architectures demand dynamic policy decisions using risk signals—TIP outputs can feed policy engines for conditional access (device risk + IP reputation). Integration patterns differ from legacy firewall-only workflows; plan identity and device signals alongside network IOCs.

Community Feeds and Legal Review

Ingesting community feeds may introduce unreliable or legally sensitive data—review terms of use and handling restrictions before auto-blocking.

Tabletop: TIP Outage During Active Incident

Exercise workflows when the TIP is unavailable: cached enrichments, manual pivot procedures, escalation paths. Discover gaps before real crises.

Training and Certification Paths

Platform-specific training accelerates value realization; combine with vendor-neutral CTI courses for analytical rigor.

Future: Real-Time Graph Analytics

Graph databases linking IOCs, infrastructure, and threat actor aliases enable advanced hunting—budget for skilled data engineers if pursuing this path.

Final Checklist for Procurement

- API completeness and documentation quality

- STIX/TAXII compliance level

- Dedupe and confidence features

- Workflow integrations (SOAR, ITSM)

- Performance at your data scale

- Exit strategy—data export formats

Bridging Intelligence to Executive Narratives

Export summarized campaign timelines from the TIP for leadership briefings—visual storytelling increases funding for CTI headcount more than raw IOC counts.

Sustainable Operations

Rotate analysts to avoid burnout; celebrate wins when enriched intelligence prevents incidents—visibility sustains morale.

Schema Evolution and Versioning

STIX and vendor schemas evolve. Implement contract tests when upstream feeds change field meanings—silent breakage is worse than loud failure. Version your internal normalizers and document upgrade windows so downstream SOAR workflows adjust in sync.
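A contract test does not need a framework to be useful. The sketch below checks each incoming record against the fields and value ranges the internal normalizer expects; the field names, the allowed types, and the 0 to 100 confidence range are assumptions standing in for whatever your own contract specifies.

```python
# Hypothetical contract: field name -> expected Python type.
REQUIRED_FIELDS = {"type": str, "value": str, "confidence": int, "first_seen": str}
ALLOWED_TYPES = {"ip", "domain", "sha256"}


def contract_violations(record):
    """Return a list of human-readable problems; empty means the record conforms."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    confidence = record.get("confidence")
    if isinstance(confidence, int) and not 0 <= confidence <= 100:
        problems.append("confidence: outside 0-100")
    if isinstance(record.get("type"), str) and record["type"] not in ALLOWED_TYPES:
        problems.append(f"type: unknown value {record['type']!r}")
    return problems
```

If an upstream feed switches confidence from a number to a label such as `"high"`, this check reports it on the first record instead of letting the value flow into scoring logic, which is the loud failure the paragraph above argues for.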

Handling Conflicting Intelligence

Two reputable sources may disagree on whether an IP is malicious. Record both perspectives with timestamps and escalate to human fusion analysts. Automated “last writer wins” strategies propagate errors. Some teams maintain dispute queues reviewed weekly, and a searchable internal wiki captures resolution patterns for repeat conflicts.
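As an alternative to last-writer-wins, a pipeline can keep every assessment and route disagreements to a dispute queue. This is a minimal sketch under assumed field names (`value`, `source`, `verdict`, `observed_at`); how the queue is stored and reviewed is left open.

```python
from collections import defaultdict


def find_disputes(assessments):
    """Group assessments by indicator and return those with conflicting verdicts.

    Each dispute keeps every assessment, ordered by observation time, so a
    human analyst sees the full history rather than only the latest write.
    """
    by_value = defaultdict(list)
    for assessment in assessments:
        by_value[assessment["value"]].append(assessment)

    disputes = {}
    for value, items in by_value.items():
        verdicts = {item["verdict"] for item in items}
        if len(verdicts) > 1:
            disputes[value] = sorted(items, key=lambda item: item["observed_at"])
    return disputes
```

Indicators where all sources agree never enter the queue, so analyst time goes only to the cases that need judgment.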

Performance Engineering at Scale

Batch enrichment jobs should parallelize responsibly to respect provider rate limits; implement token buckets and prioritize KEV-related investigations during widespread exploitation events. Observability dashboards must show queue depth—when backlogs grow, leadership decides whether to scale workers or shed noncritical feeds temporarily.
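The combination of a token bucket and priority ordering can be sketched as follows. The class names, the numeric priority scheme (lower number runs first), and the injectable clock are illustrative choices, not part of any particular platform.

```python
import heapq
import itertools
import time


class TokenBucket:
    """Permits `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.updated = clock()

    def try_acquire(self, cost=1.0):
        # Refill according to elapsed time, capped at capacity.
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class EnrichmentQueue:
    """Priority queue of enrichment jobs; lower priority numbers run first."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker keeps FIFO order per priority

    def put(self, job, priority):
        heapq.heappush(self._heap, (priority, next(self._seq), job))

    def depth(self):
        return len(self._heap)

    def drain(self, bucket):
        """Pop jobs in priority order for as long as the bucket grants tokens."""
        done = []
        while self._heap and bucket.try_acquire():
            done.append(heapq.heappop(self._heap)[2])
        return done
```

Giving KEV-related lookups priority 0 means they leave the queue first whenever capacity is scarce, and `depth()` is the number a dashboard would chart as queue depth.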

Role-Based Dashboards

Executives need risk summaries; hunters need pivot graphs; SOC managers need SLA dashboards. One-size TIP homepages frustrate everyone. Customize views per persona to drive adoption—adoption drives ROI narratives in renewal conversations.

Integrating File Intelligence

TIPs often store file hash observations alongside network IOCs. Ensure malware analysts can attach sandbox reports and YARA rule references to indicator objects—richer objects propagate better context to detection engineering than bare hash strings.

Collaboration Features and Audit Trails

Comments, tasks, and immutable audit logs support post-incident reviews and compliance evidence. When customers ask “who approved this blocklist change?”, answers should be seconds away.

Cost Control Through Feed Rationalization

Quarterly reviews should eliminate redundant paid feeds and renegotiate bundles. Plot cost per unique high-confidence IOC contributed—surprisingly effective for finance discussions.
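The cost-per-unique-IOC figure can be computed directly from feed contents. In this sketch, "unique" means no other feed supplied the same value, and "high-confidence" means a score at or above a threshold of 80; both definitions, along with the input layout, are assumptions to adapt.

```python
def cost_per_unique_ioc(feeds, threshold=80):
    """Return annual cost divided by unique high-confidence IOCs, per feed.

    feeds maps a feed name to {"annual_cost": float, "iocs": {value: confidence}}.
    A feed contributing no unique high-confidence IOCs gets float("inf").
    """
    results = {}
    for name, feed in feeds.items():
        # Everything any other feed supplied, regardless of confidence.
        others = set()
        for other_name, other in feeds.items():
            if other_name != name:
                others.update(other["iocs"])
        unique = [
            value for value, confidence in feed["iocs"].items()
            if confidence >= threshold and value not in others
        ]
        results[name] = feed["annual_cost"] / len(unique) if unique else float("inf")
    return results
```

An infinite cost flags a feed that added nothing unique at the chosen threshold, which is usually the first candidate to raise in a renewal discussion.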

Open Standards and Interoperability

Favor vendors committed to STIX exports without proprietary lock-in—even if you stay for years, optionality strengthens negotiating positions and disaster recovery planning.

Purple Team Feedback Into the TIP

After exercises, upload simulated IOCs (clearly tagged as test) to validate that pipelines do not accidentally block production traffic when flags are mis-set. Clean up test data aggressively.
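A distribution gate that checks the test tag independently of any other flag is one way to make this fail safe. The sketch below assumes indicators carry a `tags` list and that the pipeline knows which environment it is publishing to; the tag name and function are hypothetical.

```python
TEST_TAG = "test"


def select_for_blocking(indicators, environment):
    """Return the indicators that may be pushed to blocking controls.

    Test-tagged indicators are only ever released to non-production
    environments, and untagged indicators only to production.
    """
    eligible = []
    for indicator in indicators:
        is_test = TEST_TAG in indicator.get("tags", ())
        if environment == "production" and is_test:
            continue  # never block production on simulated data
        if environment != "production" and not is_test:
            continue  # keep real intelligence out of the test tenant
        eligible.append(indicator)
    return eligible


def purge_test_data(indicators):
    """Drop every test-tagged indicator, for cleanup after an exercise."""
    return [i for i in indicators if TEST_TAG not in i.get("tags", ())]
```

Running the exercise indicators through `select_for_blocking(..., "production")` and asserting that none survive is a cheap regression test for the gate itself.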

Threat Intelligence as a Product

Treat internal TIP consumers as customers: gather feature requests, publish roadmaps, and communicate downtime. Product discipline increases satisfaction more than sporadic tool training sessions.

Regional Data Residency

Multinationals may need regional deployments to satisfy data localization—architecture diagrams should depict data flows crossing borders for legal review.

Analyst Productivity Metrics Done Right

Measure outcomes, not vanity: time saved per investigation, not merely “searches performed.” Pair quantitative metrics with qualitative interviews quarterly.

Knowledge Base and Self-Service

Reduce repetitive tier-two questions by maintaining searchable FAQs on feed quirks, parsing limitations, and escalation paths—SEO applies internally too: good search reduces Slack interruptions.

When to Consider a Data Lake Instead

Some hyperscale teams ingest raw feeds into a data lake with Spark/Flink processing before TIP distribution—flexible but engineering-heavy. Choose based on team skills and data volume; midsize enterprises often succeed with managed TIPs plus selective custom pipelines.

Closing Perspective

Platforms amplify process. Invest in people and procedures first; then select technology that fits—not the reverse. Threat intelligence maturity is measured in decisions improved, not dashboards opened.

Revisit architecture reviews after major vendor releases—platform capabilities shift quickly, and stale diagrams mislead new hires during onboarding.

Frequently asked questions

- What is a threat intelligence platform (TIP)?

  A TIP aggregates threat data from multiple sources, helps analysts enrich and correlate indicators, and distributes intelligence to security controls like SIEM, SOAR, firewalls, and EDR via APIs or standards like STIX/TAXII.

- Why does data quality matter more than feed volume?

  Noisy or duplicate indicators waste analyst time, increase false positives, and erode trust in automation. High-signal feeds with metadata, confidence scores, and clear sourcing outperform large but stale blocklists.

- Should organizations build or buy a TIP?

  It depends on scale, existing data platforms, and staffing. Many teams start with SIEM enrichment and evolve toward dedicated TIPs when feed count and cross-team sharing complexity grow.

Related articles

Apr 30, 2026Threat Intelligence Risk Scoring: How to Calibrate Reputation, Reduce False Positives, and Defend Your Decisions

Apr 30, 2026Threat Intelligence Risk Scoring: How to Calibrate Reputation, Reduce False Positives, and Defend Your DecisionsA noisy score is worse than no score. Learn what makes a reputation model trustworthy, how to combine multi-source evidence, and how to communicate uncertainty to your SOC and your executives.

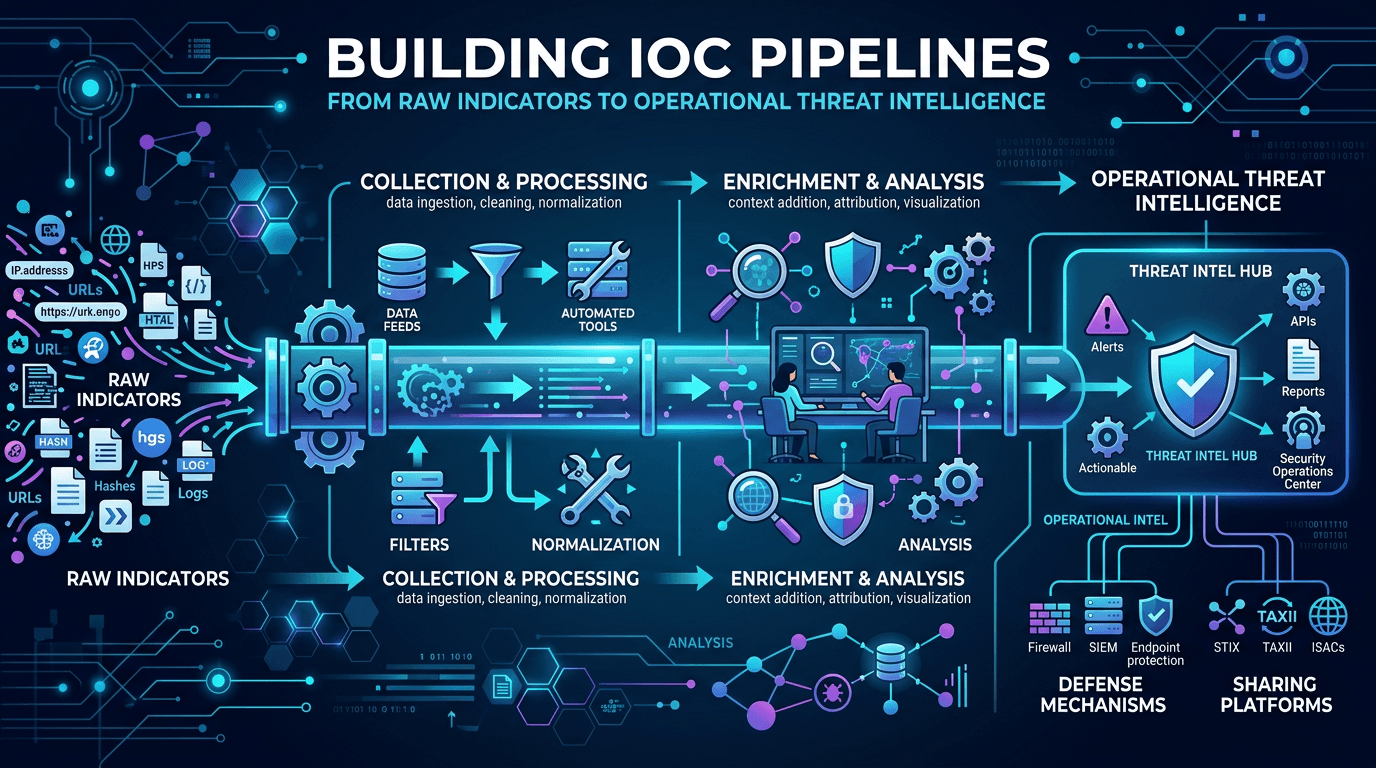

Apr 26, 2026Building IOC Pipelines: From Raw Indicators to Operational Threat Intelligence in 2026

Apr 26, 2026Building IOC Pipelines: From Raw Indicators to Operational Threat Intelligence in 2026A practical engineering guide to building indicator of compromise (IOC) pipelines—ingestion, normalization, deduplication, enrichment, scoring, distribution, and feedback—to turn raw threat feeds into operational defense.

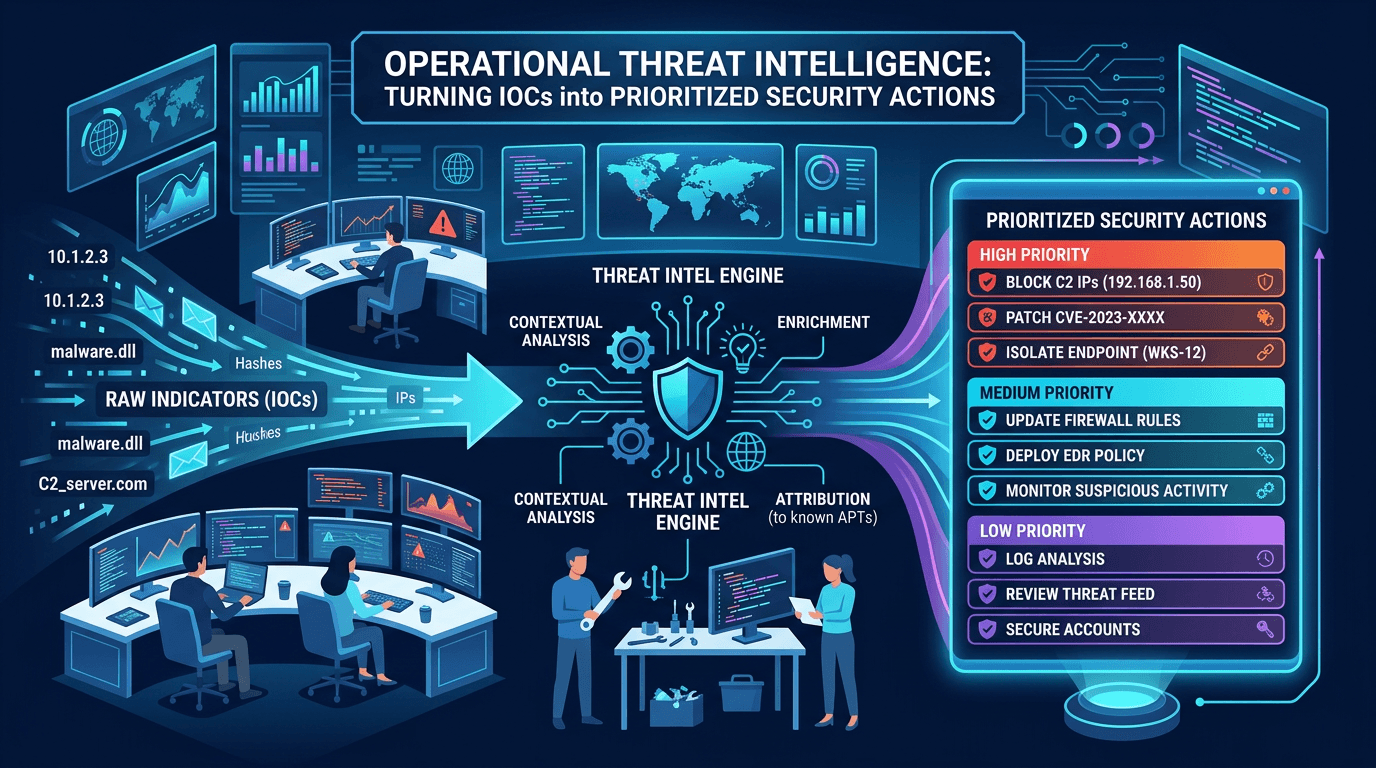

Apr 19, 2026Operational Threat Intelligence: Turning IOCs into Prioritized Security Actions

Apr 19, 2026Operational Threat Intelligence: Turning IOCs into Prioritized Security ActionsDefine operational CTI that SOC teams can use daily: IOC lifecycle, confidence scoring, feed hygiene, and how to align indicators with detection engineering and incident response.

Protect Your Infrastructure

Check any IP or domain against our threat intelligence database with 500M+ records.

Try the IP / Domain Checker