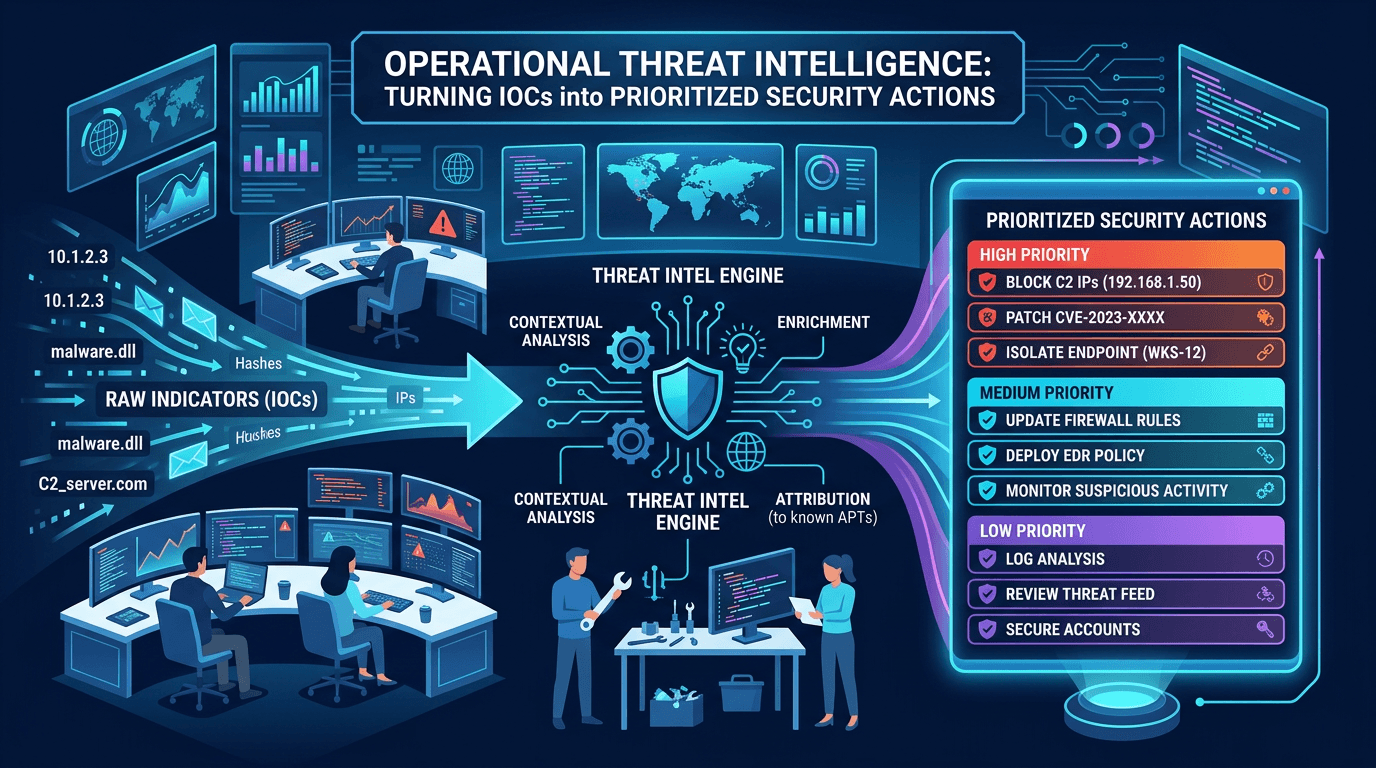

Operational Threat Intelligence: Turning IOCs into Prioritized Security Actions

Define operational CTI that SOC teams can use daily: IOC lifecycle, confidence scoring, feed hygiene, and how to align indicators with detection engineering and incident response.

Threat intelligence is a broad discipline spanning geopolitical analysis, vulnerability forecasting, and dark-web monitoring. Yet most security operations centers live or die on operational threat intelligence—the slice of CTI that answers, “What should we block, alert on, or hunt for right now?” Without operationalization, intelligence is merely interesting reading. This guide defines what high-signal operational intelligence looks like, how to prioritize indicators of compromise (IOCs), and how to integrate reputation data for IPs, domains, and URLs with workflows your analysts already use.

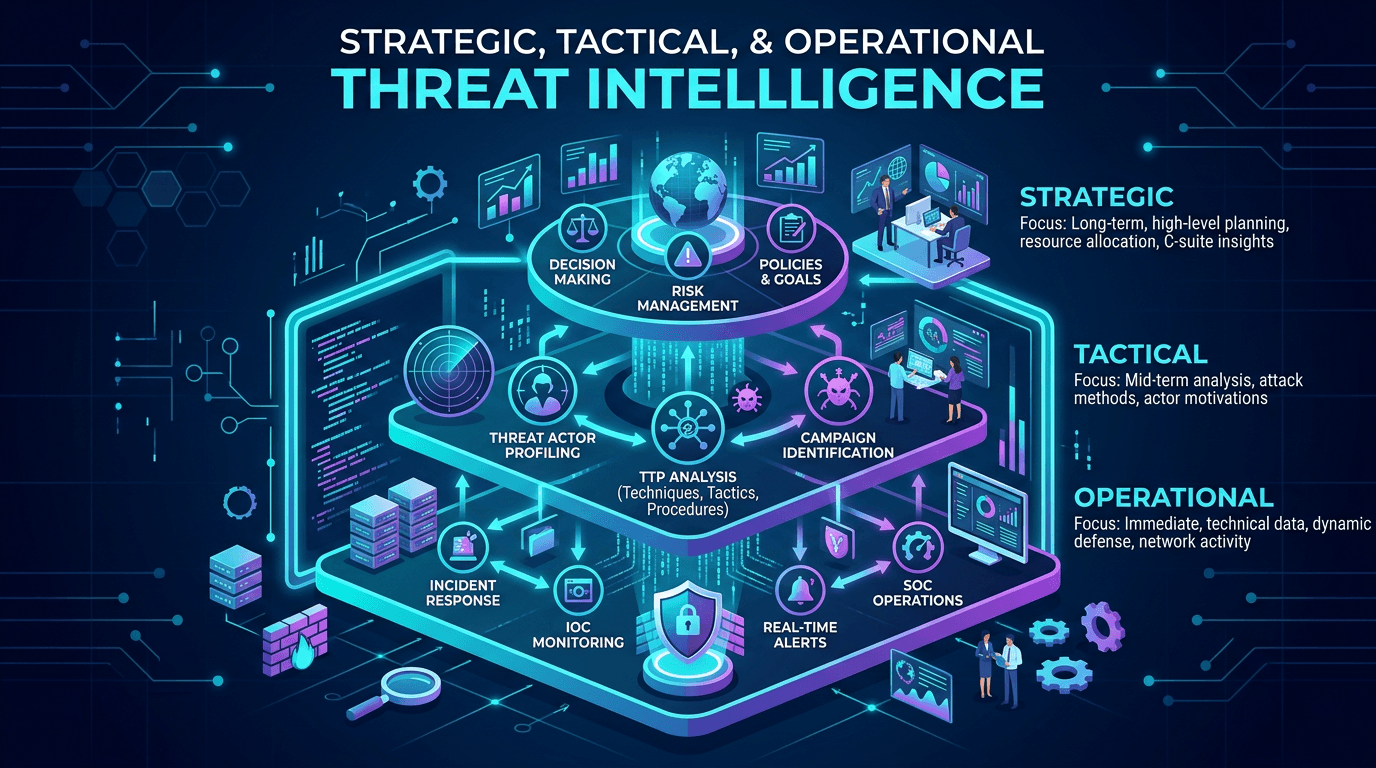

The Three Levels of Threat Intelligence (and Where Operational Fits)

Industry frameworks often describe three layers:

- Strategic intelligence supports executive decisions: trends in ransomware economics, regulatory shifts, nation-state posture toward your sector.

- Tactical intelligence translates actor goals and campaigns into techniques—often mapped to MITRE ATT&CK—helping blue teams understand how adversaries operate.

- Operational intelligence delivers concrete artifacts and short-lived facts: malicious IP addresses, domain names, phishing URLs, file hashes, and YARA/Sigma rule updates.

SOC managers searching for “operational threat intelligence examples” or “IOC prioritization” are usually trying to fix alert fatigue or prove ROI on data purchases. The fix is rarely “more feeds”; it is better governance of the feeds you already have.

What Makes an IOC Actionable?

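An indicator is not automatically actionable. Whether it is depends on your telemetry, your controls, and the indicator's freshness. Compare a malicious command-and-control (C2) domain, a hash for a dual-use tool, and long-dead infrastructure: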

- Actionable when your DNS logs cover internal clients, the domain is still registered, and your firewall can block outbound connections.

- Weakly actionable when the indicator is a file hash for a legitimate dual-use tool unless paired with suspicious execution context.

- Stale when the infrastructure was sinkholed months ago and your telemetry shows zero attempts.

Operational teams should attach metadata: first seen, last seen, prevalence, kill chain phase (reconnaissance versus exfiltration), and confidence (reported by the source or derived from corroboration). Modern STIX representations encode some of this structure; whether your tools consume it is another matter.
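As a rough illustration, the metadata listed above could travel with each indicator in a small record type. This is a minimal sketch, not a STIX schema: the `Indicator` class and all of its field names are assumptions chosen for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Indicator:
    """One IOC plus the context an analyst needs to judge it (illustrative schema)."""
    value: str                    # e.g. "evil.example" or "203.0.113.7"
    ioc_type: str                 # "domain", "ip", "url", "sha256", ...
    first_seen: datetime
    last_seen: datetime
    confidence: int               # 0-100, source-reported or derived from corroboration
    sources: list[str] = field(default_factory=list)
    phase: Optional[str] = None   # attack phase, e.g. "reconnaissance" or "exfiltration"
    internal_sightings: int = 0   # prevalence in your own telemetry

    def age_days(self, now: datetime) -> float:
        """Days since the indicator was last observed anywhere."""
        return (now - self.last_seen).total_seconds() / 86400


ioc = Indicator(
    value="evil.example",
    ioc_type="domain",
    first_seen=datetime(2026, 1, 1, tzinfo=timezone.utc),
    last_seen=datetime(2026, 1, 10, tzinfo=timezone.utc),
    confidence=80,
    sources=["feed-a"],
)
```

Keeping `last_seen` and `internal_sightings` on the record makes later steps such as expiry and tiering simple functions over one object rather than joins across tools.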

Confidence Scoring and False Positives

Feeds that label everything “malicious” without gradation force analysts to treat alerts as binary. A better model uses tiers:

- High confidence: multiple independent sources, aligned with internal sightings, matches known threat actor infrastructure patterns.

- Medium confidence: single reputable source, plausible but not yet observed internally.

- Low confidence: noisy community submissions, possible false positives, requires corroboration before blocking.

Tune automated blocking to high-confidence tiers only; route medium-confidence indicators to detection rules that favor alerting over hard blocks. Low-confidence items belong in hunt hypotheses or research queues—not production blocklists.
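The tiering and routing rules above can be sketched as two small functions. The thresholds, parameter names, and action labels here are assumptions for illustration, and the "matches known actor infrastructure" criterion is left out to keep the example short.

```python
def confidence_tier(independent_sources: int, seen_internally: bool,
                    reputable_source: bool) -> str:
    """Assign a tier from corroboration signals (simplified, hypothetical criteria)."""
    if independent_sources >= 2 and seen_internally:
        return "high"
    if reputable_source:
        return "medium"
    return "low"


# Each tier maps to exactly one downstream action.
ROUTING = {
    "high": "block",        # automated blocking
    "medium": "alert",      # detection rule that alerts instead of blocking
    "low": "hunt_queue",    # hunt hypothesis or research queue
}


def route(tier: str) -> str:
    """Return the action for a tier; unknown tiers raise KeyError on purpose."""
    return ROUTING[tier]
```

Failing loudly on an unknown tier is a deliberate choice in this sketch: a mislabeled indicator should never fall through to a production blocklist by default.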

Feed Hygiene: Deduplication, Expiration, and Overlap

Operational threat intelligence programs collapse under duplicate IOCs. The same phishing domain may appear across ten feeds with slightly different naming. Deduplicate using normalized forms: punycode for internationalized domains, lowercase labels, and canonical IP representations.
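A minimal sketch of that normalization, using only the Python standard library. The function names and the `(type, value)` tuple layout are assumptions for this example. Python's built-in `idna` codec follows the older IDNA 2003 rules, which is usually adequate for deduplication but may differ from newer registry behavior for some names.

```python
import ipaddress


def normalize_domain(raw: str) -> str:
    """Lowercase, drop the trailing root dot, and convert Unicode labels to punycode."""
    name = raw.strip().rstrip(".").lower()
    return name.encode("idna").decode("ascii")


def normalize_ip(raw: str) -> str:
    """Return the canonical text form of an IPv4 or IPv6 address."""
    return str(ipaddress.ip_address(raw.strip()))


def dedupe(indicators: list[tuple[str, str]]) -> set[tuple[str, str]]:
    """Collapse (type, value) pairs that differ only in spelling."""
    unique = set()
    for ioc_type, value in indicators:
        if ioc_type == "domain":
            unique.add((ioc_type, normalize_domain(value)))
        elif ioc_type == "ip":
            unique.add((ioc_type, normalize_ip(value)))
        else:
            unique.add((ioc_type, value.strip()))
    return unique
```

For example, `Evil.Example.` and `evil.example` collapse to one entry, as do `2001:0db8::0001` and `2001:db8::1`.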

Expiration matters. IPs change hands; domains expire and return to the pool clean. Maintain time-to-live (TTL) policies that retire indicators after inactivity. Permanent lists without decay produce false positives on reassigned infrastructure, skew any scoring built on top of them, and train staff to ignore alerts.
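One way to express such a policy is a per-type TTL table checked against each indicator's last sighting. The TTL values below are placeholders, not recommendations, and the tuple layout is an assumption for this sketch.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical TTLs: IPs churn quickly, hashes stay meaningful longer.
TTL_BY_TYPE = {
    "ip": timedelta(days=30),
    "domain": timedelta(days=90),
    "sha256": timedelta(days=365),
}
DEFAULT_TTL = timedelta(days=60)


def is_expired(ioc_type: str, last_seen: datetime, now: datetime) -> bool:
    """True when the indicator has been inactive longer than its type allows."""
    return now - last_seen > TTL_BY_TYPE.get(ioc_type, DEFAULT_TTL)


def retire_stale(indicators: list[tuple[str, str, datetime]], now: datetime):
    """Split (type, value, last_seen) records into active and retired lists."""
    active, retired = [], []
    for record in indicators:
        ioc_type, _value, last_seen = record
        (retired if is_expired(ioc_type, last_seen, now) else active).append(record)
    return active, retired
```

Retired indicators are returned rather than discarded so they can be archived for retrospective hunts.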

Overlap analysis helps you understand vendor value. If two paid feeds contribute identical IOC sets, you may reallocate budget toward enrichment or staffing rather than redundant data.
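A simple way to quantify overlap is the Jaccard similarity between each pair of feeds, plus a count of what each feed contributes that no other feed does. This sketch assumes indicators have already been normalized; the function names are illustrative.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared indicators divided by all indicators across both feeds."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlap_report(feeds: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Pairwise Jaccard similarity for every combination of feeds."""
    return {
        (x, y): jaccard(feeds[x], feeds[y])
        for x, y in combinations(sorted(feeds), 2)
    }


def unique_contribution(feeds: dict[str, set[str]], name: str) -> set[str]:
    """Indicators that appear in the named feed and in no other."""
    others = set().union(*(v for k, v in feeds.items() if k != name))
    return feeds[name] - others
```

A paid feed with a high pairwise score against a free one and a near-empty unique contribution is a candidate for the budget conversation described above.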

Mapping IOCs to Detection Engineering

Operational intelligence only delivers value when detection engineers translate it into durable logic:

- Network IOCs become firewall rules, DNS sinkhole responses, proxy block categories, and Zeek/Suricata signatures.

- Host IOCs become EDR queries, registry watchers, and file integrity monitors.

- Email IOCs become transport rules and header anomaly detectors.

The handoff should include test cases: sample benign traffic that must not match, and red-team validation that the rule fires on intended samples. Without that, “push IOCs to SIEM” becomes a backlog graveyard.
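The test-case handoff can be as small as a list of samples that must match and a list that must not. The sketch below models a DNS blocklist rule as a plain Python predicate; the rule, the sample names, and the `validate_rule` helper are all assumptions made for illustration, not a real SIEM or Suricata interface.

```python
from typing import Callable


def matches_blocked_domain(query: str, blocked: set[str]) -> bool:
    """True if the queried name equals a blocked domain or is a subdomain of one."""
    name = query.rstrip(".").lower()
    labels = name.split(".")
    # Check every suffix on a label boundary: a.b.c, then b.c, then c.
    return any(".".join(labels[i:]) in blocked for i in range(len(labels)))


def validate_rule(rule: Callable[[str], bool], must_match: list[str],
                  must_not_match: list[str]) -> list[str]:
    """Return human-readable failures; an empty list means the rule passes."""
    failures = [f"missed: {s}" for s in must_match if not rule(s)]
    failures += [f"false positive: {s}" for s in must_not_match if rule(s)]
    return failures


BLOCKED = {"evil.example"}
MUST_MATCH = ["evil.example", "c2.evil.example", "EVIL.example."]
MUST_NOT_MATCH = ["notevil.example", "evil.example.com", "example"]
```

Running `validate_rule` against a naive substring check such as `lambda q: "evil.example" in q` surfaces both kinds of error: it misses the uppercase variant and fires on the benign lookalikes.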

Enrichment: From Bare Indicators to Context-Rich Alerts

An alert that says “connection to bad IP” wastes analyst time. Enrichment adds autonomous system ownership, geolocation, passive DNS history, related domains, and known malware families. APIs that expose IP and domain reputation—such as those offered by isMalicious—reduce pivot time by centralizing multi-source scoring.
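Enrichment can be sketched as a loop over pluggable lookup functions whose results are attached to the alert. Everything here is hypothetical: the `enrich` function, the alert layout, and the lookup callables stand in for whatever reputation, passive DNS, or ASN services you use, and no real vendor API is implied.

```python
from typing import Callable

Lookup = Callable[[str], dict]


def enrich(alert: dict, lookups: dict[str, Lookup]) -> dict:
    """Return a copy of the alert with one context entry per lookup."""
    enriched = dict(alert, context={})
    for name, lookup in lookups.items():
        try:
            enriched["context"][name] = lookup(alert["indicator"])
        except Exception as exc:
            # A failed lookup is recorded, not fatal: the analyst still gets the alert.
            enriched["context"][name] = {"error": str(exc)}
    return enriched


def asn_stub(indicator: str) -> dict:
    """Placeholder for a real ASN ownership lookup."""
    return {"asn": 64500, "owner": "EXAMPLE-NET"}


alert = {"indicator": "203.0.113.7", "rule": "outbound-c2"}
enriched_alert = enrich(alert, {"asn": asn_stub})
```

Recording lookup errors inside the alert keeps enrichment outages visible without blocking triage.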

For SEO, buyers frequently compare “threat intelligence API enrichment latency” and “bulk lookup limits.” Technical posts should mention integration patterns (REST, streaming, batch) because those keywords match late-stage evaluation searches.

Operational Intelligence and Incident Response

During active incidents, operational CTI accelerates containment:

- Identify lateral movement IPs seen in peer organizations’ breach reports.

- Block emergent phishing domains registered hours ago.

- Share newly discovered file hashes with partners through trusted channels.

Post-incident, feed newly validated IOCs back into your threat library with higher confidence and documented provenance. That feedback loop distinguishes mature programs from one-way ingestion.

Measuring Operational CTI Program Health

Track metrics aligned with outcomes:

- Mean time to block for high-confidence IOCs after publication.

- Alert true positive rate for intelligence-driven detections.

- Hunt yield: validated findings per hunt hour when using operational reports.

- Coverage: percentage of telemetry sources (DNS, proxy, email) actually consulted during investigations.

Avoid vanity metrics like “IOCs ingested per day” unless you can show corresponding detection coverage.
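Three of the metrics above reduce to short calculations once the underlying events are recorded. This is a sketch under assumed inputs: the function names and the shape of the event data are illustrative, and "true positive rate" follows the article's usage, meaning the share of intelligence-driven alerts that were confirmed true positives.

```python
from datetime import datetime, timedelta


def mean_time_to_block(events: list[tuple[datetime, datetime]]) -> timedelta:
    """Average delay between IOC publication and the block going live."""
    if not events:
        raise ValueError("no block events to measure")
    total = sum((blocked - published for published, blocked in events), timedelta())
    return total / len(events)


def true_positive_rate(true_positives: int, false_positives: int) -> float:
    """Confirmed true positives as a fraction of all triaged alerts."""
    total = true_positives + false_positives
    return true_positives / total if total else 0.0


def hunt_yield(validated_findings: int, hunt_hours: float) -> float:
    """Validated findings per hour of hunting."""
    return validated_findings / hunt_hours if hunt_hours else 0.0
```

Feeding these from ticket timestamps rather than manual spreadsheets keeps the numbers comparable from quarter to quarter.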

People, Process, and the Analyst Interface

Tools alone do not operationalize intelligence. Define roles:

- Intel analysts curate feeds, score confidence, and brief stakeholders.

- Detection engineers implement and tune rules.

- Incident responders validate real-world sightings and adjust tiers.

Analysts need interfaces where they can escalate an internal finding to “enterprise block” status with audit trails—without opening tickets across five systems.

Threat Intelligence in Regulated Environments

Financial services, healthcare, and critical infrastructure often participate in ISACs and government sharing programs. Operational requirements may include classified or restricted distribution lists. Your technical documentation should capture handling instructions so engineers do not accidentally paste sensitive IOCs into public ticket comments.

Common Failure Modes

- Feed sprawl: dozens of overlapping sources with no owner.

- No retirement policy: stale IOCs accumulate forever.

- Ignoring internal intelligence: your own phishing simulations and malware samples outperform generic feeds when curated.

- Treating tactical TTP reports as blocking lists: MITRE ATT&CK technique IDs are not directly blockable; they must first be translated into detections over your own telemetry.

Integrating Vulnerability Intelligence

Operational programs strengthen when linked to CVE exploitation chatter. If threat actors weaponize a vulnerability in your stack, operational intel may surface scanning IPs, exploit file hashes, or attacker infrastructure used in follow-on phases. Combine patch urgency driven by the Exploit Prediction Scoring System (EPSS) with network IOC blocking while patches deploy.

Purple Team Exercises That Stress-Test Intelligence Consumption

Purple team exercises validate whether operational CTI truly reaches controls. Run quarterly exercises where red operators emulate published TTPs while blue teams must detect using only indicators and reports you claim to operationalize. Gaps reveal broken pipelines: for example, IOCs sitting unused in a threat platform because nobody mapped them to Zeek scripts. Document remediation as engineering tickets, not vague “improve intel” notes.

Publish anonymized lessons learned internally; those write-ups become training material for new hires and improve institutional memory beyond individual analysts.

Vendor Evaluation Criteria Buyers Actually Search For

Procurement teams compare threat intelligence vendors using checklists that map well to SEO clusters: API rate limits, STIX/TAXII support, historical passive DNS depth, false positive handling, data residency, and mean-time-to-ingest for emergent campaigns. When authoring content, spell out evaluation criteria explicitly—practitioners paste those sections into RFPs.

Ask vendors how they differentiate compromised infrastructure from intentionally malicious registrations; both may appear “bad” but imply different response playbooks (victim notification versus blocking). Nuanced vendor responses correlate with fewer surprise escalations after purchase.

Playbooks: When an IOC Hits, What Happens Next?

Operational intelligence matures when every major IOC class has a playbook: phishing domain observed internally, outbound connection to a known C2 IP, rare file hash execution on a workstation without a corporate signer. Playbooks should list enrichment steps, containment options, communication templates, and evidence preservation—especially where legal may require disclosure.

Link playbooks to ticketing categories so metrics roll up automatically. Without that linkage, leadership sees alert volume but not process adherence.

Threat Hunting Powered by Operational Reports

Hunting is not random searching; it is hypothesis-driven exploration informed by intelligence. When a sector report describes a new loader family, translate indicators into hunts across your telemetry even before alerts fire. Successful hunts should produce new detection logic—not just one-off findings.

Knowledge Management and Search

Store curated intelligence summaries in a searchable internal wiki with consistent tagging (threat-actor, sector, technique-id). Analysts troubleshooting similar future cases benefit from prior write-ups. Externally, your public blog can host sanitized versions of those insights to capture organic traffic for long-tail queries like “IOC prioritization framework” or “operational CTI metrics.”

Conclusion

Operational threat intelligence is the bridge between global adversary activity and the concrete work of SOC analysts. Success depends on curated IOC lifecycles, confidence scoring, enrichment, and relentless feedback from incidents into feed quality. When organizations treat operational CTI as a detection product—with owners, SLAs, and measurable outcomes—they stop drowning in data and start reducing mean time to detect and respond.

Long-form SEO content wins when it addresses practitioner pain directly: prioritization, false positives, integration, and metrics. Articles that do that earn sustained organic traffic from teams building business cases for platforms and APIs.

Looking Ahead: Automation and Responsible AI

Automation can cluster related domains, summarize open-source reports, and suggest detection candidates. Human review remains essential to prevent over-blocking and to handle geopolitically sensitive attribution carefully. Document how automated suggestions are validated—auditors and customers increasingly ask.

Operational excellence also means testing resilience: if your intel provider has an outage, can you still respond using cached reputation data and local analytics? Maintain offline snapshots of critical IOC sets for continuity.
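The fallback behavior described above can be sketched as a wrapper that tries the live provider first and consults a local snapshot when the provider is unreachable. The function name, the verdict dictionaries, and the snapshot layout are assumptions; a real client would also need timeouts and snapshot freshness checks.

```python
from typing import Callable, Optional


def lookup_with_fallback(
    indicator: str,
    live_lookup: Callable[[str], dict],
    snapshot: dict[str, dict],
) -> tuple[Optional[dict], str]:
    """Return (verdict, origin), where origin is "live" or "snapshot"."""
    try:
        return live_lookup(indicator), "live"
    except (ConnectionError, TimeoutError):
        # Provider outage: answer from the cached IOC set, or None if unknown.
        return snapshot.get(indicator), "snapshot"
```

Returning the origin alongside the verdict lets downstream tooling flag decisions that were made on cached data during an outage.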

Finally, invest in cross-training so SOC tier-one analysts understand the difference between a file hash alert and a domain reputation alert—each implies different investigative steps. Shared vocabulary reduces handoff friction and improves customer-facing incident narratives.

Frequently Asked Questions

- What is operational threat intelligence?

- Operational threat intelligence is timely, actionable information that helps defenders detect, investigate, and respond to threats. It is usually expressed as IOCs, TTP summaries, and detection guidance that stay relevant for days to weeks.

- How is operational intelligence different from strategic intelligence?

- Strategic intelligence informs long-term risk and investment decisions. Operational intelligence focuses on immediate defensive actions—blocking, detecting, hunting—while tactical intelligence often bridges actor profiling with specific techniques.

- Why do SOC teams struggle with threat feeds?

- Feeds fail when they lack context, duplicate entries, conflict with internal baselines, or are not mapped to detection logic. Success requires curation, confidence scoring, and feedback loops from incidents back into feed quality.

Related articles

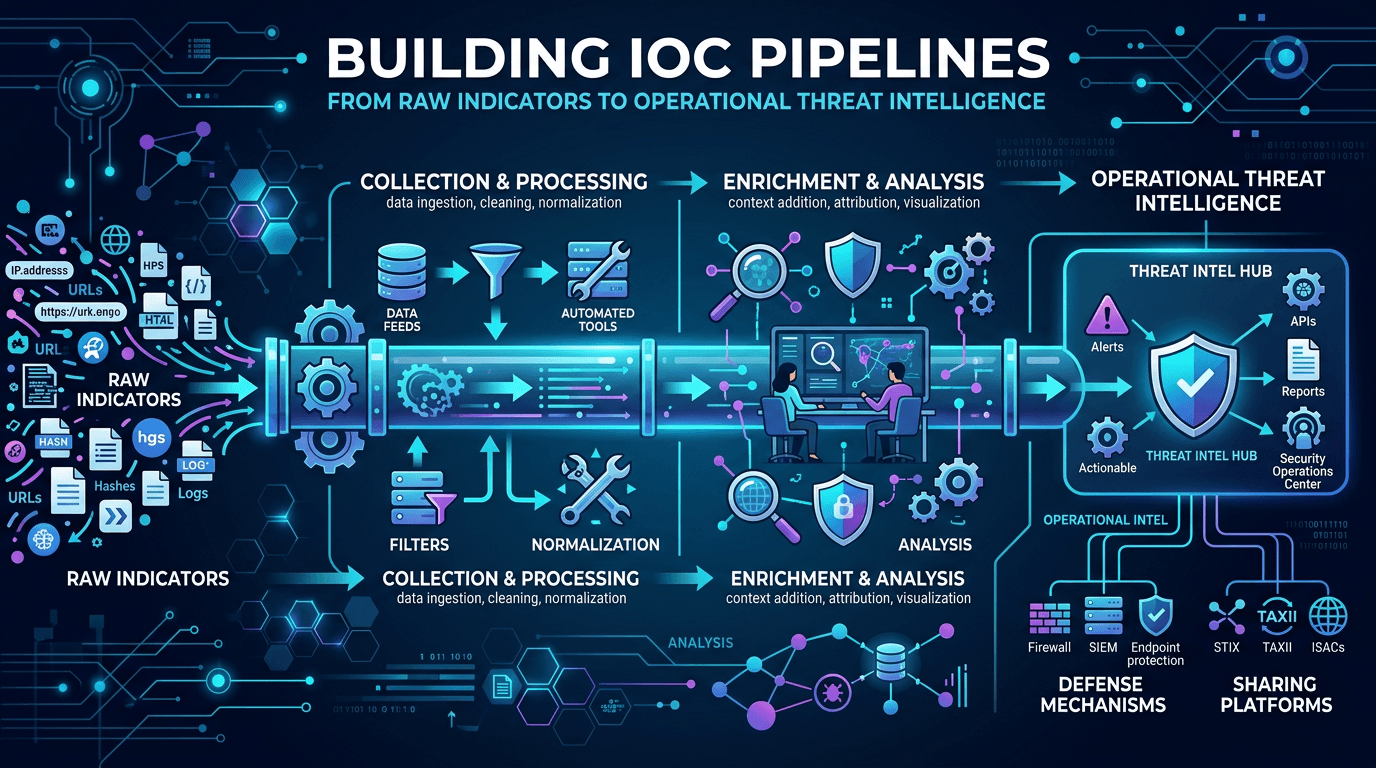

Apr 26, 2026Building IOC Pipelines: From Raw Indicators to Operational Threat Intelligence in 2026

Apr 26, 2026Building IOC Pipelines: From Raw Indicators to Operational Threat Intelligence in 2026A practical engineering guide to building indicator of compromise (IOC) pipelines—ingestion, normalization, deduplication, enrichment, scoring, distribution, and feedback—to turn raw threat feeds into operational defense.

Apr 23, 2026Strategic, Tactical, and Operational Threat Intelligence: Frameworks for Modern Security Programs

Apr 23, 2026Strategic, Tactical, and Operational Threat Intelligence: Frameworks for Modern Security ProgramsAlign CTI outputs with audience needs: executive risk narratives, SOC-ready IOCs, and MITRE-mapped TTPs—plus governance models that keep intelligence timely and measurable.

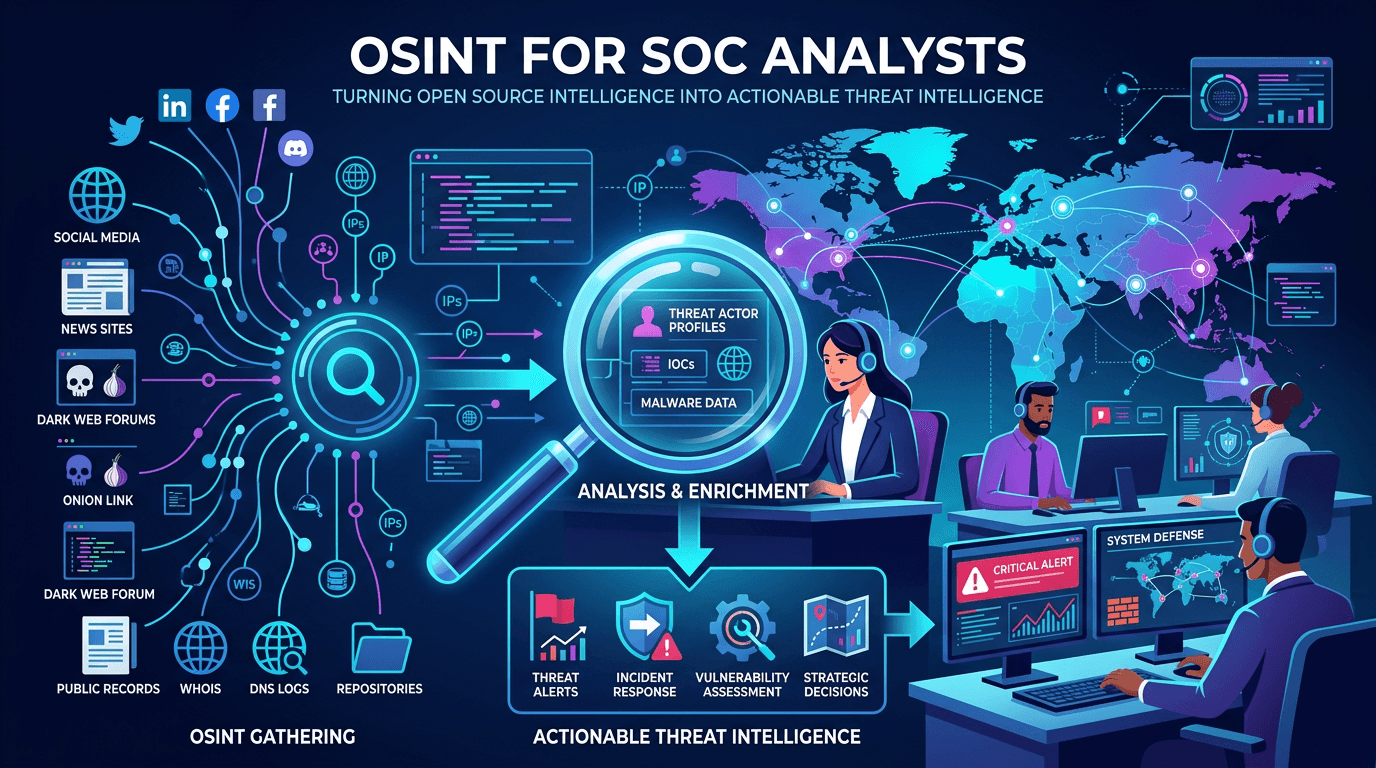

Apr 23, 2026OSINT for SOC Analysts: Turning Open Source Intelligence Into Actionable Threat Intelligence

Apr 23, 2026OSINT for SOC Analysts: Turning Open Source Intelligence Into Actionable Threat IntelligenceA complete guide to open source intelligence (OSINT) for security operations—tools, techniques, workflows, and legal considerations for collecting, analyzing, and operationalizing open threat data in a modern SOC.

Protect Your Infrastructure

Check any IP or domain against our threat intelligence database with 500M+ records.

Try the IP / Domain Checker