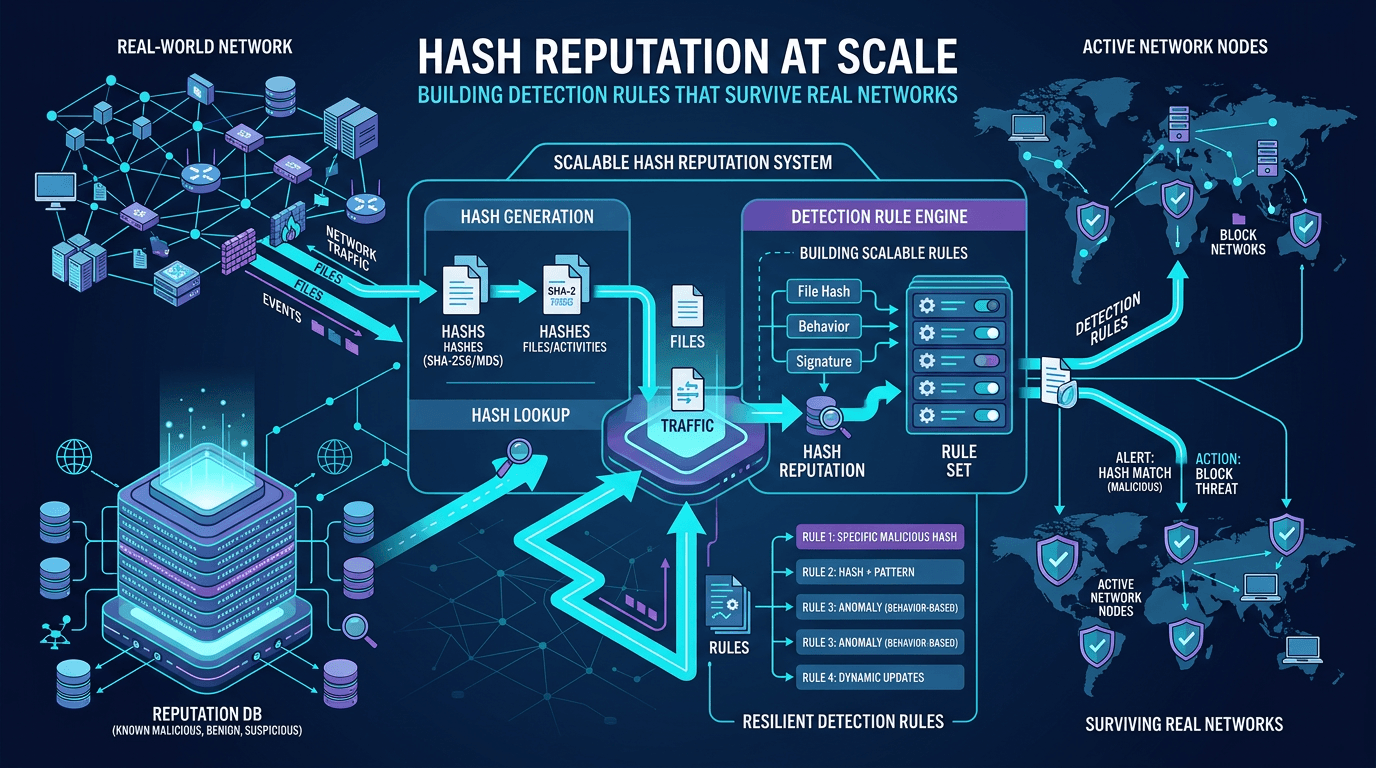

Hash Reputation at Scale: Building Detection Rules That Survive Real Networks

Move beyond one-off hash blocks: design reputation pipelines, reduce false positives, and integrate file intelligence with IP and domain context for enterprise-grade detection engineering.

File hash indicators are seductively simple: compute SHA-256, query a database, take action. At enterprise scale, simplicity becomes a trap. This guide explains how to implement hash reputation systems that remain accurate as your organization grows—integrating threat intelligence, tuning detection rules, and pairing static signals with IP and domain context from platforms like isMalicious.

The Lifecycle of a Hash Indicator

A file hash enters your ecosystem through many doors: EDR telemetry, email attachments, browser downloads, CI/CD artifact registries, or incident response image captures. Each observation should trigger:

- Normalization — canonical SHA-256 formatting, deduplication.

- Enrichment — reputation lookups, signer extraction, prevalence counts.

- Decisioning — alert, block, allowlist candidate, or hunt hypothesis.

- Feedback — analyst verdicts improve future automation.

Skipping any of these steps fills SOC queues with “unknown hash” tickets that never feed anything back into the pipeline.
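The normalization step can be sketched as follows. This is a minimal illustration, not a reference implementation: the function names and the tolerated `sha256:` prefix are assumptions chosen for the example.

```python
import re
from typing import Iterable, List, Optional

# A canonical SHA-256 is exactly 64 lowercase hexadecimal characters.
SHA256_RE = re.compile(r"[0-9a-f]{64}")


def normalize_sha256(raw: str) -> Optional[str]:
    """Return the canonical lowercase form, or None if the input is not a SHA-256."""
    candidate = raw.strip().lower()
    # Assumption: some tools emit a "sha256:" prefix; strip it if present.
    if candidate.startswith("sha256:"):
        candidate = candidate[len("sha256:"):]
    return candidate if SHA256_RE.fullmatch(candidate) else None


def dedupe_hashes(raw_values: Iterable[str]) -> List[str]:
    """Normalize values, drop invalid ones, and keep the first occurrence of each hash."""
    seen = set()
    result = []
    for raw in raw_values:
        normalized = normalize_sha256(raw)
        if normalized is not None and normalized not in seen:
            seen.add(normalized)
            result.append(normalized)
    return result
```

Normalizing before deduplication matters because the same file often arrives in upper case from one tool and lower case from another.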

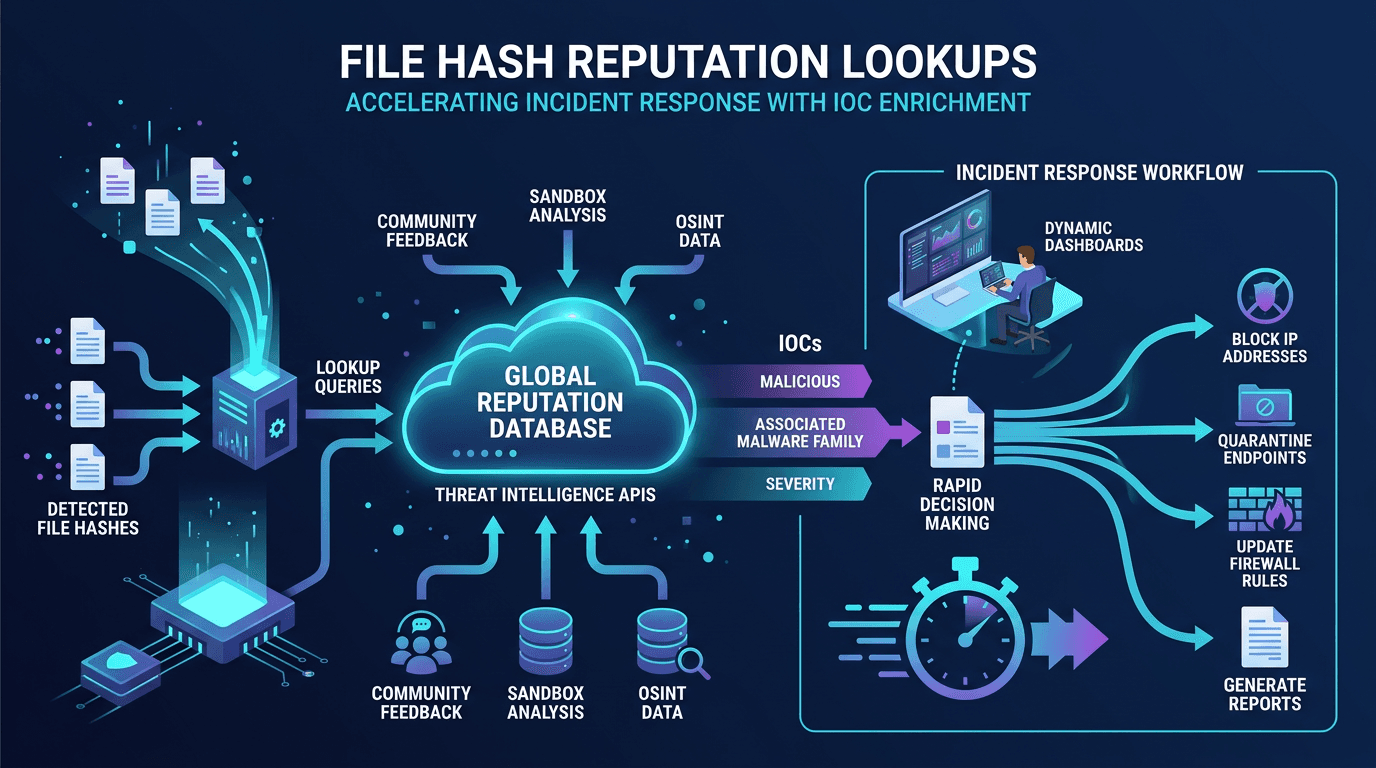

Reputation Sources: Feeds, Sandboxes, and Community Intel

Hash reputation aggregates labels from antivirus engines, sandbox detonations, researcher blogs, and peer sharing programs. Quality varies: commercial feeds offer SLAs and provenance; community lists may include stale or contested entries.

Implement source weighting: a match in multiple independent high-trust sources beats a single low-trust submission. Track confidence explicitly in your SOAR playbooks so tier-one analysts know whether to escalate automatically.
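One way to make source weighting explicit is a noisy-OR combination, sketched below. The source names, the weights, and the escalation threshold are all hypothetical values for illustration, and the formula assumes the sources are independent, which real feeds often are not.

```python
# Hypothetical trust weights in [0, 1]; tune these to your own feed quality.
SOURCE_WEIGHTS = {
    "commercial_feed": 0.9,
    "internal_sandbox": 0.8,
    "community_list": 0.3,
}
UNKNOWN_SOURCE_WEIGHT = 0.1  # assumption: unrecognized sources get little trust


def combined_confidence(sources):
    """Noisy-OR: 1 minus the product of each distinct source's miss probability."""
    miss_probability = 1.0
    for source in set(sources):
        weight = SOURCE_WEIGHTS.get(source, UNKNOWN_SOURCE_WEIGHT)
        miss_probability *= 1.0 - weight
    return 1.0 - miss_probability


def triage_action(sources, escalate_at=0.95):
    """Map the combined confidence to a playbook branch."""
    if combined_confidence(sources) >= escalate_at:
        return "escalate"
    return "queue_for_review"
```

With these example weights, a single community submission stays in the review queue, while agreement between the commercial feed and the internal sandbox crosses the escalation threshold.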

Prevalence and the Base Rate Problem

A rare hash on one machine might be benign niche software; the same rarity on five finance laptops within an hour suggests lateral movement staging. Prevalence analytics—global and organizational—transforms raw hash matches into situational alerts.

Vendors increasingly expose prevalence APIs; internal telemetry can compute “first seen in org” timestamps cheaply. SEO-minded documentation for security products should explain prevalence clearly—buyers compare this feature across tools during evaluations.
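Organizational prevalence can be computed from telemetry you already collect. The sketch below keeps sightings in memory and answers two questions: when a hash was first seen in the organization, and how many distinct hosts saw it in a recent window. The class name and the one-hour default are assumptions; a production system would back this with a database.

```python
from collections import defaultdict
from datetime import datetime, timedelta


class OrgPrevalence:
    """In-memory record of which hosts observed which hash, and when."""

    def __init__(self):
        self._sightings = defaultdict(list)  # sha256 -> [(timestamp, host), ...]

    def observe(self, sha256, host, seen_at):
        self._sightings[sha256].append((seen_at, host))

    def first_seen(self, sha256):
        """Earliest sighting in the organization, or None if never seen."""
        events = self._sightings.get(sha256)
        return min(ts for ts, _ in events) if events else None

    def hosts_in_window(self, sha256, now, window=timedelta(hours=1)):
        """Distinct hosts that observed the hash between now - window and now."""
        return {
            host
            for ts, host in self._sightings.get(sha256, [])
            if now - window <= ts <= now
        }
```

A rule can then alert when `len(hosts_in_window(...))` jumps for a hash whose `first_seen` value is only minutes old.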

Reducing False Positives: Context Layers

Layered logic outperforms binary blocklists:

- Signer and publisher reputation: block unsigned binaries where policy demands code signing.

- Path heuristics: user profile `Downloads` versus `Program Files` versus ephemeral `%TEMP%` launches.

- Parent-child process graphs: `explorer.exe` downloading then executing is common; `winword.exe` spawning unexpected unsigned children is not.

- Network correlation: simultaneous connections to low-reputation IPs or young domains increase confidence.

When writing detection rules, encode these layers as composite conditions, not isolated hash equality checks.
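A composite condition can be sketched as an additive score over the context layers listed above. The field names, point values, and thresholds are illustrative assumptions, not recommended settings.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: Office applications that should rarely spawn unsigned children.
OFFICE_PARENTS = {"winword.exe", "excel.exe", "powerpnt.exe"}


@dataclass
class ExecutionEvent:
    sha256: str
    signer: Optional[str]          # None means the binary is unsigned
    path: str
    parent_process: str
    hash_flagged: bool             # reputation lookup returned a malicious label
    low_reputation_connection: bool


def context_score(event: ExecutionEvent) -> int:
    """Add points for each suspicious context layer."""
    path = event.path.lower()
    unsigned = event.signer is None
    score = 0
    if event.hash_flagged:
        score += 3
    if unsigned:
        score += 1
    if "\\appdata\\local\\temp\\" in path:
        score += 2
    elif "\\downloads\\" in path:
        score += 1
    if unsigned and event.parent_process.lower() in OFFICE_PARENTS:
        score += 3
    if event.low_reputation_connection:
        score += 2
    return score


def decide(event: ExecutionEvent, alert_at: int = 4, escalate_at: int = 7) -> str:
    score = context_score(event)
    if score >= escalate_at:
        return "escalate"
    return "alert" if score >= alert_at else "log"
```

Note that an event can escalate here without any hash match at all, which is the point of layering: context carries the detection when reputation data is missing.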

Allowlists: The Unsung Hero

Developers, auditors, and red teams generate “scary” binaries that are intentional. Without allowlists keyed by hash, signer, and deployment channel, you will fight the same tickets weekly.

Govern allowlist changes: owners, expiry dates, and mandatory removal after projects conclude. Periodic audits prevent permanent exceptions that become undeclared supply chain risks.
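A governed allowlist can be modeled as entries keyed by hash, signer, and deployment channel, each with an owner and an expiry date. The sketch below is one possible shape; the class and field names are assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AllowlistEntry:
    sha256: str
    signer: str
    channel: str   # e.g. the deployment tool that is expected to deliver the file
    owner: str     # person or team accountable for the exception
    expires: date  # last day on which the exception is valid


class Allowlist:
    def __init__(self):
        self._entries = {}

    def add(self, entry: AllowlistEntry) -> None:
        self._entries[(entry.sha256, entry.signer, entry.channel)] = entry

    def is_allowed(self, sha256: str, signer: str, channel: str, today: date) -> bool:
        """All three key parts must match and the entry must not have expired."""
        entry = self._entries.get((sha256, signer, channel))
        return entry is not None and today <= entry.expires

    def expired(self, today: date):
        """Entries that a periodic audit should remove or renew."""
        return [e for e in self._entries.values() if e.expires < today]
```

Because the key includes the channel, the same hash arriving through an unexpected path still alerts.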

Scale Challenges: Storage, Indexing, and Query Latency

Billions of file hashes exist. Architectural considerations:

- Bloom filters for quick “definitely not seen” negatives before expensive lookups.

- Sharding reputation databases by hash prefix.

- Edge caching for repeated lookups within short windows during outbreaks.

Latency-sensitive paths (mail gateways) may sacrifice deep enrichment for speed, pushing heavy analytics to asynchronous pipelines—document trade-offs so stakeholders understand why some alerts arrive minutes later with richer context.
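The Bloom filter and prefix sharding ideas above can be sketched with the standard library alone. The sizing parameters are placeholder assumptions; real deployments size the filter from the expected item count and the tolerated false positive rate.

```python
import hashlib


class BloomFilter:
    """Answers 'definitely not seen' or 'possibly seen'; never a false negative."""

    def __init__(self, size_bits=1 << 20, num_hashes=7):
        self.size_bits = size_bits
        self.num_hashes = num_hashes
        self._bits = bytearray((size_bits + 7) // 8)

    def _positions(self, item):
        # Double hashing: derive all bit positions from two 64-bit halves of one digest.
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.size_bits for i in range(self.num_hashes)]

    def add(self, item):
        for pos in self._positions(item):
            self._bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item):
        return all(self._bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


def shard_for(sha256_hex, shard_count=256):
    """Pick a shard from the first four hex characters of the hash."""
    return int(sha256_hex[:4], 16) % shard_count
```

A lookup path would call `might_contain` first and only query the shard returned by `shard_for` when the filter answers "possibly seen".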

Integrating Hash Intelligence with Network Telemetry

Malware seldom exists without communication. When a suspicious hash executes, automatically pivot to domain and IP reputation for live connections. isMalicious specializes in that network-centric view, complementing file-centric databases.

A cross-domain correlation rule might read: “Unsigned binary with low global prevalence AND outbound DNS queries to a domain registered less than 24 hours ago AND a destination IP flagged as high risk” → high-severity incident.
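Expressed as code, that correlation is a strict conjunction rather than a score. In this sketch the field names and the prevalence cutoff of 10 are assumptions; only the 24-hour domain age and the three-way AND come from the rule narrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class CorrelatedObservation:
    signed: bool
    global_prevalence: int           # number of sightings reported by a prevalence source
    domain_registered_at: datetime   # registration time of the queried domain
    ip_risk: str                     # e.g. "low", "medium", "high"


def is_high_severity(obs, now, low_prevalence_max=10, young_domain=timedelta(hours=24)):
    """True only when file, domain, and IP signals all agree."""
    domain_is_young = now - obs.domain_registered_at < young_domain
    return (
        not obs.signed
        and obs.global_prevalence <= low_prevalence_max
        and domain_is_young
        and obs.ip_risk == "high"
    )
```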

Detection-as-Code and Version Control

Store detection rules—Sigma, YARA, EQL—in Git repositories with pull requests and tests. When hash lists update, CI should validate syntax and simulate sample logs. This discipline scales better than editing rules in vendor GUIs without history.
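A CI job for a hash list can be as small as a linter plus a replay of sample logs. The sketch below assumes a hypothetical file format of one lowercase SHA-256 per line with `#` comments, and sample events shaped as dictionaries with `id` and `sha256` keys.

```python
import re

SHA256_LINE = re.compile(r"[0-9a-f]{64}")


def validate_hash_list(lines):
    """Return a list of human-readable errors; an empty list means the file passes."""
    errors = []
    first_seen_on = {}
    for lineno, line in enumerate(lines, start=1):
        entry = line.strip()
        if not entry or entry.startswith("#"):
            continue  # blank lines and comments are allowed
        if not SHA256_LINE.fullmatch(entry):
            errors.append(f"line {lineno}: not a lowercase SHA-256")
        elif entry in first_seen_on:
            errors.append(f"line {lineno}: duplicate of line {first_seen_on[entry]}")
        else:
            first_seen_on[entry] = lineno
    return errors


def replay_sample_logs(blocklist, events):
    """Return the ids of sample events the hash list would match."""
    blocked = set(blocklist)
    return [event["id"] for event in events if event["sha256"] in blocked]
```

The pipeline would fail the pull request when `validate_hash_list` returns errors or when `replay_sample_logs` disagrees with the expected matches stored next to the sample logs.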

Measuring Rule Health

Per-rule metrics:

- True positive rate and false positive rate over rolling windows.

- Median time to triage for alerts referencing the rule.

- Coverage: percentage of endpoints reporting hash telemetry needed for the rule to fire reliably.

Retire noisy rules rather than letting them erode SOC trust.
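These metrics can be computed from closed alerts. One caveat is built into the sketch: without ground truth for missed detections, the "rates" here are shares of dispositioned alerts, which is an assumption about what the metric means in practice. The dictionary keys are hypothetical.

```python
from statistics import median


def rule_health(alerts, endpoints_reporting, endpoints_total):
    """Summarize one rule from its alerts over a rolling window.

    Each alert is a dict with a "verdict" ("true_positive", "false_positive",
    or anything else for still-open alerts) and "triage_minutes".
    """
    closed = [a for a in alerts if a["verdict"] in ("true_positive", "false_positive")]
    true_positives = sum(1 for a in closed if a["verdict"] == "true_positive")
    return {
        "true_positive_share": true_positives / len(closed) if closed else None,
        "false_positive_share": (len(closed) - true_positives) / len(closed) if closed else None,
        "median_triage_minutes": median(a["triage_minutes"] for a in closed) if closed else None,
        "coverage": endpoints_reporting / endpoints_total if endpoints_total else None,
    }
```

A rule whose false positive share stays high while coverage is complete is a candidate for retirement rather than tuning.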

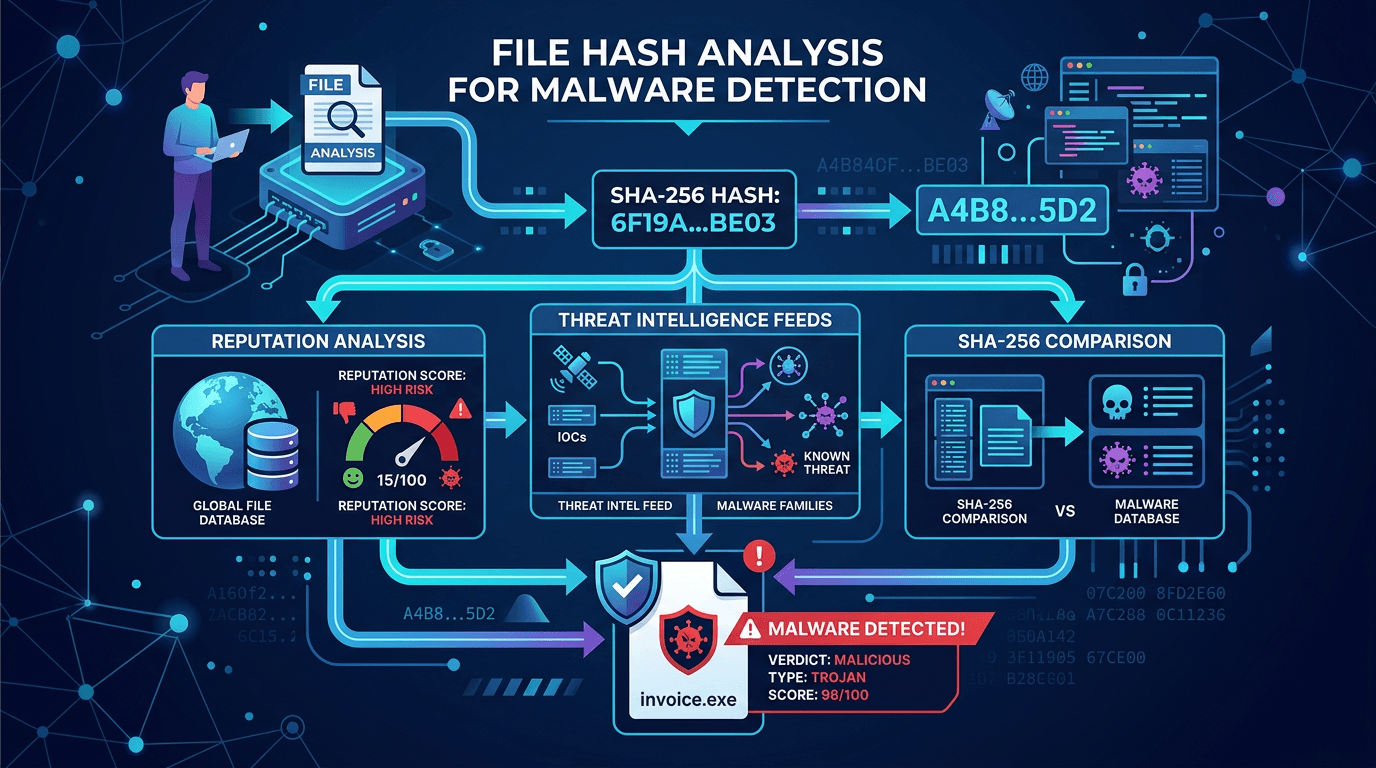

Threat Actor Campaigns and Hash Families

Some threat actors reuse builder kits producing structurally similar samples. Fuzzy hashing (ssdeep) and import table similarity clustering can catch variants when exact SHA-256 matches fail. Combine fuzzy techniques cautiously—tuning thresholds avoids accidental blocking of legitimate software clusters.
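Import table similarity can be illustrated with Jaccard similarity over sets of imported function names. This sketch covers only that half of the idea (ssdeep requires a third-party binding), assumes the import names have already been extracted by a PE parser, and uses a greedy clustering pass with an arbitrary 0.8 threshold.

```python
def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_by_imports(samples, threshold=0.8):
    """Greedy clustering: a sample joins the first cluster whose representative is similar enough.

    `samples` maps a sample id to an iterable of imported function names.
    """
    clusters = []  # list of (representative import set, member ids)
    for sample_id, imports in samples.items():
        for representative, members in clusters:
            if jaccard(representative, imports) >= threshold:
                members.append(sample_id)
                break
        else:
            clusters.append((set(imports), [sample_id]))
    return [members for _, members in clusters]
```

Lowering the threshold catches more variants but also pulls in legitimate software that happens to share common imports, which is the tuning risk described above.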

Incident Response: Hash Collections as Evidence

IR teams export hash lists from compromised hosts to compare against golden images. Using the same hash algorithm across all tools prevents reconciliation nightmares during legal discovery.
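The golden image comparison reduces to a diff between two manifests. The sketch below assumes each manifest is a dictionary mapping a file path to its SHA-256 in hex, both produced with the same algorithm.

```python
def diff_against_golden(host_manifest, golden_manifest):
    """Classify host files as added, missing, or modified relative to the golden image."""
    added = sorted(p for p in host_manifest if p not in golden_manifest)
    missing = sorted(p for p in golden_manifest if p not in host_manifest)
    modified = sorted(
        p
        for p in host_manifest
        if p in golden_manifest
        # Compare case-insensitively because tools differ in hex casing.
        and host_manifest[p].lower() != golden_manifest[p].lower()
    )
    return {"added": added, "missing": missing, "modified": modified}
```

Files in the `added` and `modified` lists are the ones worth sending to reputation lookups first.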

Regulatory and Privacy Angles

Retention policies for file hash observations may intersect with employee monitoring regulations. Transparent acceptable-use and monitoring notices matter—especially in EU jurisdictions with strict workplace privacy interpretations.

Purple Team Validation

Red operators introduce custom binaries with unknown hashes to verify blue detections catch behavior when reputation data is empty. If defenses fail open, prioritize behavioral analytics upgrades over expanding static lists.

Economic Realities: Feed Costs versus Staff Time

Cheaper feeds with high noise can cost more in analyst hours than premium feeds with curation. Model total cost of ownership when budgeting; SEO articles that discuss TCO resonate with finance-conscious buyers.

Roadmap: Moving from Reactive to Predictive

Machine learning models can rank likelihood of maliciousness for new hashes using features beyond strings—entropy, section counts, compiler fingerprints. Such models require governance to prevent discriminatory or opaque decisions; explainability features matter for regulated industries.
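Entropy is the simplest of those features to compute. The sketch below calculates Shannon entropy in bits per byte; the surrounding feature pipeline and any threshold for "suspiciously high" are left out because they depend on the model.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte: 0.0 for constant data, 8.0 for uniform bytes."""
    if not data:
        return 0.0
    total = len(data)
    return -sum(
        (count / total) * math.log2(count / total)
        for count in Counter(data).values()
    )
```

Packed or encrypted sections tend to score close to 8 bits per byte, while plain text and sparse data score much lower, which is why the value is useful as a model input and also easy to explain to a reviewer.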

Knowledge Base Design for Internal Support

Create internal articles answering frequent questions: “Why was this hash blocked?” with links to reputation sources and appeal processes. Support teams resolve user inquiries faster, reducing escalations to senior analysts.

Conclusion

Hash reputation is foundational, not sufficient. Build pipelines that enrich file hashes with prevalence, signer context, and network intelligence; version your detection rules; and measure outcomes continuously. Teams that operationalize this layered approach block more real threats with fewer business disruptions—and that is the operational definition of scale.

For organic search, pair technical depth with implementation checklists; practitioners bookmark actionable references longer than purely definitional glossaries.

Extended Operations: Rotating Indicators and Campaign Tracking

During large-scale phishing or ransomware waves, hash indicators rotate hourly. Track campaigns by clustering metadata—email subjects, domain registration patterns, wallet addresses—rather than individual hashes alone. Your threat intelligence function should publish campaign-level briefings so SOC staff understand the story behind atomic IOCs.

Collaboration with Development and Release Engineering

CI systems should automatically register SHA-256 values for released artifacts into internal trust stores. That registration prevents future false positives when EDR flags your own installers after an update. Treat release hashes as part of the software bill of materials handoff—especially when supply chain concerns dominate headlines.
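A release job could register artifact hashes with a step like the one sketched here. The trust store is a plain dictionary standing in for whatever internal service holds trusted hashes, and the function names are assumptions.

```python
import hashlib
import os


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large installers do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def register_release(trust_store, artifact_path, version):
    """Record the artifact's SHA-256 with enough metadata to trace it to a release."""
    file_hash = sha256_file(artifact_path)
    trust_store[file_hash] = {
        "artifact": os.path.basename(artifact_path),
        "version": version,
    }
    return file_hash
```

Running this as the last step of the release pipeline means the hash is trusted before the first endpoint ever sees the new installer.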

SOAR Playbooks: From Hash Match to Containment

Automated response should branch on asset sensitivity. A malicious hash on a generic kiosk may trigger isolation; the same indicator on a domain controller may require coordinated failover and executive notification. Encode branching in SOAR with human checkpoints for high-impact systems—full automation without guardrails risks operational outages.

Include enrichment calls in playbooks: after hash confirmation, fetch related CVE exploit modules if applicable, and query IP/domain IOCs from active network sessions. Playbooks that stop after the first IOC miss containment opportunities.
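The branching on asset sensitivity described in this section might look like the following in a playbook's decision step. The role names, action labels, and notification targets are hypothetical; a real SOAR platform would express the same logic in its own playbook format.

```python
# Assumption: roles where automatic isolation could itself cause an outage.
CRITICAL_ROLES = {"domain_controller", "core_database", "payment_gateway"}


def plan_response(asset_role, confirmed_malicious):
    """Choose a containment plan; critical assets always get a human checkpoint."""
    if not confirmed_malicious:
        return {"action": "enrich_and_review", "requires_approval": False, "notify": []}
    if asset_role in CRITICAL_ROLES:
        return {
            "action": "coordinated_failover",
            "requires_approval": True,
            "notify": ["incident_commander", "executive_on_call"],
        }
    return {"action": "isolate_host", "requires_approval": False, "notify": ["soc_queue"]}
```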

YARA Versus Hash Rules: Choosing the Right Primitive

YARA rules match byte patterns and textual strings; hash rules match exact content. Use YARA when families share code regions but rotate hashes constantly. Use hash blocklists when samples are stable or when you must prove exact file identity for legal evidence. Many mature programs deploy both: YARA for discovery, hash lists for deterministic blocking after analyst confirmation.

Document performance implications: poorly written YARA rules can degrade endpoint performance, so test them on representative hardware profiles.

Hash Pipelines in Email and Web Gateways

Mail security appliances compute hashes of attachments and of payloads downloaded from embedded URLs. Latency budgets are tight; prioritize caching and asynchronous deep analysis for borderline verdicts. Publish internal SLAs so messaging teams understand why some messages are delivered with a slight delay when sandboxing triggers.

Threat Intelligence Producer–Consumer Contracts

If your organization produces hash IOCs internally (from malware reverse engineering), publish metadata: source system, collection time, analyst confidence, and whether the sample relates to an active threat actor campaign. Downstream consumers can then tune severity. For internal portals, SEO matters less than consistency, and structured fields are what enable automation.
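A structured record for internally produced IOCs could be as simple as the dataclass sketched below. The field names mirror the metadata listed above; the three confidence levels and the JSON serialization are assumptions, and teams already exchanging STIX would map these fields onto that format instead.

```python
import json
from dataclasses import asdict, dataclass

CONFIDENCE_LEVELS = ("low", "medium", "high")  # assumption: a three-level scale


@dataclass
class HashIOC:
    sha256: str
    source_system: str
    collected_at: str        # ISO 8601 timestamp as a string
    analyst_confidence: str  # one of CONFIDENCE_LEVELS
    active_campaign: bool

    def __post_init__(self):
        if self.analyst_confidence not in CONFIDENCE_LEVELS:
            raise ValueError(f"unknown confidence: {self.analyst_confidence!r}")


def to_json(ioc: HashIOC) -> str:
    """Serialize with sorted keys so consumers get a stable field order."""
    return json.dumps(asdict(ioc), sort_keys=True)
```

Rejecting unknown confidence values at creation time keeps free-text labels from leaking into consumer automation.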

Handling Conflicting Verdicts Across Vendors

Antivirus engines disagree. Establish arbitration policies: majority vote, tie-breaker vendor list, or manual review thresholds. Without policies, tier-one analysts debate endlessly during incidents. Periodically reconcile disagreements with fresh sandbox runs—behavior breaks ties when static labels conflict.
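An arbitration policy combining the three options above can be written down in a few lines. The vendor names, the minimum vote count, and the verdict labels are placeholders.

```python
from collections import Counter


def arbitrate(verdicts, tie_breakers=("vendor_a", "vendor_b"), min_votes=3):
    """Resolve conflicting verdicts.

    `verdicts` maps a vendor name to "malicious" or "benign".
    Order of precedence: too few votes -> manual review; clear majority wins;
    on a tie, the first listed tie-breaker vendor that voted decides.
    """
    if len(verdicts) < min_votes:
        return "manual_review"
    counts = Counter(verdicts.values())
    malicious, benign = counts["malicious"], counts["benign"]
    if malicious != benign:
        return "malicious" if malicious > benign else "benign"
    for vendor in tie_breakers:
        if vendor in verdicts:
            return verdicts[vendor]
    return "manual_review"
```

Writing the policy as code also makes it testable, so a change to the tie-breaker list goes through the same review as any detection rule.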

Tabletop: Hash Alert Storm Simulation

Simulate ten thousand benign installer updates during business hours and observe the alert volume. Many enterprises discover that hash-centric rules cannot keep up with their release cadence. Adjust the rules before they collide with a real Patch Tuesday.

Maturity Model for Hash-Centric Detection

- Stage 1: Manual lookups.

- Stage 2: Automated enrichment.

- Stage 3: Composite detections with network context.

- Stage 4: Closed-loop learning from analyst feedback into supervised models.

- Stage 5: Cross-organizational sharing with STIX packages.

Honest self-assessment guides investment sequencing.

Why Long-Form Guides Rank for “Hash Reputation” Queries

Searchers want architecture—not slogans. Articles that discuss indexing strategies, false positive handling, and integration with domain reputation answer commercial-intent queries from teams comparing vendors and designing internal platforms.

Closing the Loop with Vulnerability Response

Sometimes hash intelligence intersects CVE response: exploit binaries circulate with stable fingerprints until defenders widely block them. When patch deployment lags, temporary hash-based blocks on known weaponized samples reduce opportunistic script-kiddie reuse—even as advanced adversaries customize payloads. Pair temporary blocks with sunset dates tied to patch completion.

Analyst Training Notes

Train new SOC hires to record parent process trees and network flows whenever they disposition hash alerts—those notes become future hunt queries and improve composite detections. Good documentation habits multiply team leverage beyond individual shifts.

Small process improvements compound faster than buying another feed nobody operationalizes.

Frequently asked questions

What is hash reputation?

Hash reputation is the assessed trustworthiness or maliciousness of a file based on aggregated telemetry, multi-vendor scanning, prevalence data, and analyst labels tied to a cryptographic hash such as SHA-256.

Why do hash-only detection rules cause operational pain?

Malware authors change bytes to rotate hashes; legitimate software updates also change hashes constantly. Rules that fire on any unknown hash create noise. Context (signer, path, parent process, prevalence) reduces false positives.

How should teams prioritize which hashes to block automatically?

Use high-confidence intelligence with corroboration, focus on unsigned binaries in suspicious locations, and align automated blocks with change windows where possible. Maintain allowlists for internal builds and known tools.
