Security LLM and Agent Workflows: When (and How) to Check Malicious Domains, IPs, and URLs Before Acting

AI assistants in SOAR, IDEs, and browser extensions can exfiltrate data or run malicious code if they fetch the wrong link. This guide gives guardrails: schema for tool calls, policy tiers, and where threat intelligence checks belong in the loop.

Short answer: Any autonomous agent with outbound network access is a remote browser you did not mean to hand users. If it can follow a URL, you need reputation gating, sandboxed fetch, and a policy layer the LLM cannot override with clever prose.

For an executive-level overview of how attackers abuse ML, read AI-powered cyberattacks: machine learning threats. This page is the practitioner companion, focused on agent architectures.

The New Failure Mode: "The Assistant Fetched the Payload"

In classic SOAR, you trusted only your vetted playbooks. When you wrap those playbooks in an LLM that can read tickets, attackers can smuggle indirect instructions in ticket bodies, email bodies, and paste bins—plus the usual malicious links.

The mitigations are part prompt injection defense, and part network microsegmentation of tool calls.

A Reference Architecture: Model vs Tools vs Policy

| Layer | Responsibility | Fails if… |
| --- | --- | --- |
| Policy | who may fetch what, at what data sensitivity | you let the model call HTTP directly |
| Tool server | performs URL normalization, allow/deny, fetch | you pass cookies or internal tokens into the fetcher |
| Enrichment | returns structured verdicts, not long text | you trust a single no-name list with no recency |
| Human | approves only high-impact actions | you skip approvals because "it was the AI" |

The enrichment layer is where a fast, multi-entity API can sit. The guides on IOC enrichment in depth and SIEM+SOAR object design are directly relevant if you are wiring this into enterprise tooling.

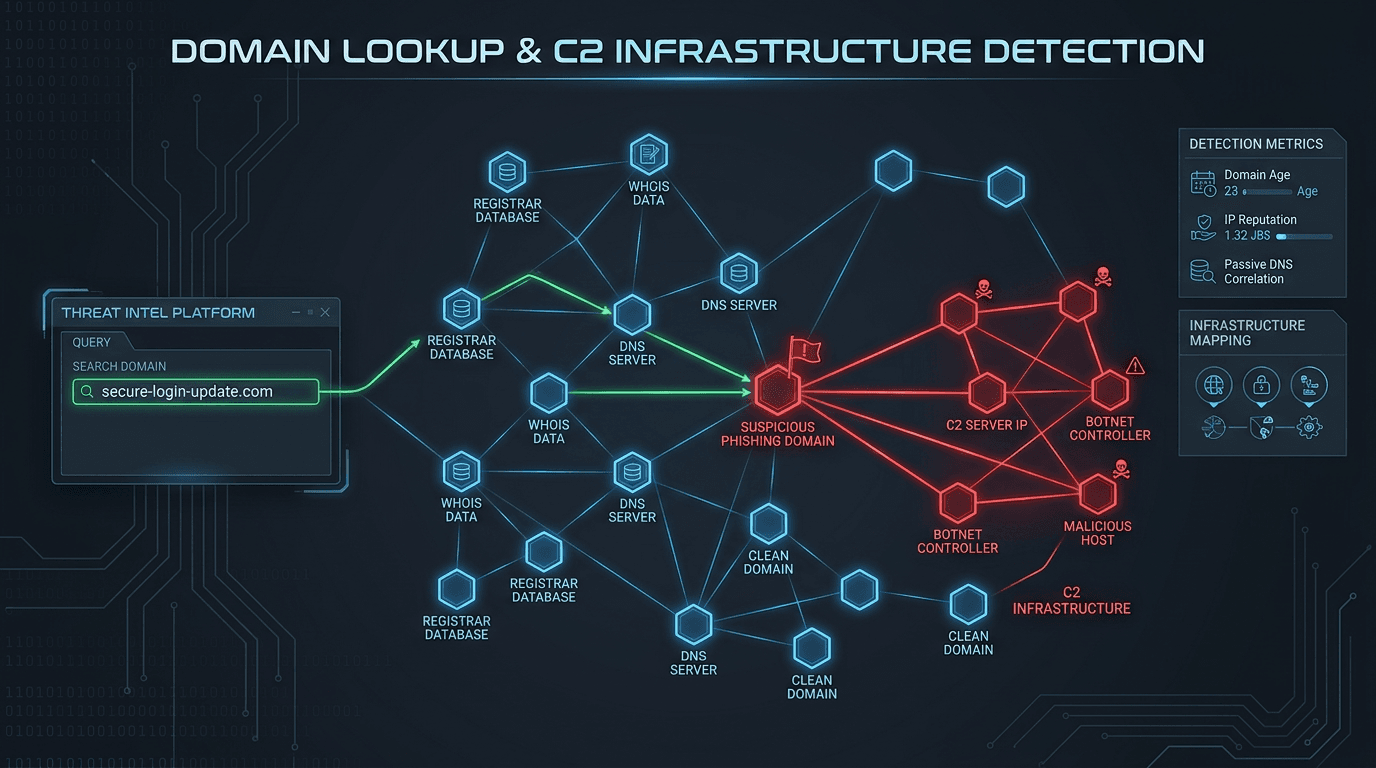

What to Do Before a Fetch: A Tiered Checklist

- Parse and canonicalize the URL (punycode, defang/refang) — a repeat of the lessons in IDN homograph attacks and how to read URLs safely

- Block fetches to your own internal domains, SSO, and helpdesk hosts unless a route is explicitly allowlisted, to prevent SSRF into internal services

- Look up the reputation of the hostname and resolved IPs using an aggregated service like isMalicious, consistent with the IP/domain check guide

- If unknown: fetch only in a hardened headless profile with no corporate authentication and with download disabled by default

- If the target is a document: check the content type; never "open" it with Office macros enabled; treat archives as hot until scanned
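The first two checklist steps can be sketched with the Python standard library alone. This is a minimal illustration, not a complete normalizer: the function names `refang`, `canonical_host`, and `is_internal_address` are hypothetical, the defang patterns handled are only the most common ones, and DNS resolution is assumed to happen elsewhere in the tool server.

```python
import ipaddress
import re
from urllib.parse import urlsplit

# Matches a defanged scheme such as hxxp:// or hXXps:// at the start of the text.
_DEFANGED_SCHEME = re.compile(r"^hxxp(s?)://", re.IGNORECASE)


def refang(text: str) -> str:
    """Undo a few common defanging conventions so the URL can be parsed."""
    out = text.strip().replace("[.]", ".").replace("(.)", ".").replace("[:]", ":")
    return _DEFANGED_SCHEME.sub(r"http\1://", out)


def canonical_host(url: str) -> str:
    """Return the lowercase, punycode (ASCII) form of the URL's hostname."""
    parts = urlsplit(refang(url))
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError("only absolute http(s) URLs are accepted")
    # The built-in idna codec converts Unicode labels to their xn-- form.
    return parts.hostname.encode("idna").decode("ascii")


def is_internal_address(ip: str) -> bool:
    """True for addresses an agent should not reach without an allowlisted route."""
    addr = ipaddress.ip_address(ip)
    return (
        addr.is_private
        or addr.is_loopback
        or addr.is_link_local
        or addr.is_reserved
        or addr.is_multicast
        or addr.is_unspecified
    )
```

Comparing the punycode form against blocklists and allowlists keeps look-alike Unicode hostnames from slipping past string matching, and checking every resolved IP with `is_internal_address` is what stops a public hostname from pointing the fetcher at an internal service.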

LLM+SOC Playbooks: Design Rules That Do Not Melt

- Never pass raw user email to a browser automation step without a domain/IP gate

- Always show analysts the structured enrichment output, not a model paraphrase, before blocking network routes

- Log the tool chain: who triggered the runbook, which model version, which indicator list versions

The model can summarize—it should not be the only evidence.
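One way to make the logging rule concrete is a fixed audit record written as a JSON line for every tool call. The sketch below is an assumption about shape, not a prescribed format: the class name `ToolCallAudit` and all field names are hypothetical and should be mapped to whatever your SIEM expects.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToolCallAudit:
    triggered_by: str              # who started the runbook
    runbook: str                   # which playbook ran
    model_version: str             # which model proposed the action
    indicator_list_versions: dict  # e.g. {"blocklist": "v42"}
    observable: str                # the indicator that was checked
    decision: str                  # what the policy layer decided
    at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: ToolCallAudit) -> str:
    """Serialize with sorted keys so log lines diff cleanly."""
    return json.dumps(asdict(record), sort_keys=True)
```

Because the record stores the structured decision rather than the model's summary, an analyst can later reconstruct what the tools actually returned.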

The Human Parallel: AEO and Citations

If you want your public documentation to be well-cited by AI systems and humans alike, the editorial guidance in answer-engine optimization for security brands applies. Good external docs make internal tool policies easier to write because terms are not ambiguous.

Comparing to Pure Email Phishing (Still Relevant)

Many agent prompts will ingest a URL from an email. Your mail stack still needs DMARC, SPF, and DKIM discipline. Agents amplify risks because they act faster than people read footers.

Where isMalicious Fits

isMalicious is a practical reputation and enrichment layer in front of fetches, inline policy checks, and automated blocklists when the goal is a fast yes/no/maybe-with-friction answer across IPs, domains, URLs, and hashes. For capability boundaries vs VirusTotal, read isMalicious vs VirusTotal.

Operational tip: in agent frameworks, make check_indicator(observable) a tool with a strict schema and fixed timeouts, then have the LLM compose its answer only after the tool result returns.
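A rough sketch of that tip follows, under stated assumptions. The `lookup` callable stands in for whatever reputation client you use (it is not a real isMalicious SDK call), and the band names, the `Verdict` type, and the two-second default are illustrative choices. The point is the invariants: validated input, a hard timeout, and a fixed return type that degrades to "unknown" instead of raising into the agent loop.

```python
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from dataclasses import dataclass
from typing import Callable

ALLOWED_KINDS = {"ip", "domain", "url", "hash"}
BANDS = {"clean", "suspicious", "malicious", "unknown"}  # assumed band names
LOOKUP_TIMEOUT_S = 2.0  # illustrative default


@dataclass(frozen=True)
class Verdict:
    kind: str
    value: str
    band: str
    reasons: tuple


def check_indicator(kind: str, value: str,
                    lookup: Callable[[str, str], dict],
                    timeout_s: float = LOOKUP_TIMEOUT_S) -> Verdict:
    """Validate the input, run the lookup with a hard timeout, return a Verdict."""
    if kind not in ALLOWED_KINDS:
        raise ValueError(f"unsupported indicator kind: {kind!r}")
    if not isinstance(value, str) or not value or len(value) > 2048:
        raise ValueError("indicator value must be a non-empty string")

    pool = ThreadPoolExecutor(max_workers=1)
    try:
        raw = pool.submit(lookup, kind, value).result(timeout=timeout_s)
    except FutureTimeout:
        return Verdict(kind, value, "unknown", ("lookup timed out",))
    except Exception:
        return Verdict(kind, value, "unknown", ("lookup failed",))
    finally:
        pool.shutdown(wait=False)  # do not block the caller on a slow lookup

    # Reject anything that does not match the expected response shape.
    if not isinstance(raw, dict) or raw.get("band") not in BANDS:
        return Verdict(kind, value, "unknown", ("malformed lookup response",))
    reasons = tuple(str(r) for r in raw.get("reasons", ()))
    return Verdict(kind, value, raw["band"], reasons)
```

Returning "unknown" on timeout or failure leaves the allow, challenge, or block choice with the policy layer, so a slow upstream never turns into a silent allow.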

Bottom Line

The future of security automation is not "an LLM with root." It is a tight system where models propose and tools enforce—and threat intelligence is one of the tools that must be fast, structured, and explainable.

Validate indicators the same way your agents should: with the isMalicious IP / domain checker and, for automation, the APIs described in the threat API comparison for 2026.

Tool Schema Sketch (Pseudocode)

The important part is not the language; it is the invariants (timeouts, no cookies, and structured return types):

check_url(url) -> { normalized_url, host, resolved_ips[] }

reputation_for(host, ip?) -> { band, reasons[], as_of, sources_count }

decide(band, policy) -> { allow|challenge|block, audit_ref }

log_everything() -> // never skip this in agent flows

If the LLM is allowed to call http_fetch, it should call check_url first, then reputation_for, and only then a sandboxed fetcher that cannot access your SSO. This pairs naturally with the reputation-first mindset in IOC enrichment for SOC programs.
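The ordering above can be enforced in code rather than in the prompt. The sketch below is one possible shape, assuming hypothetical band names ("clean", "unknown", "suspicious", "malicious") and a hypothetical `STANDARD_POLICY` tier; `check_url`, `reputation_for`, and `sandboxed_fetch` are passed in as callables so the example stays independent of any particular client or sandbox.

```python
import uuid
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    BLOCK = "block"


@dataclass(frozen=True)
class Reputation:
    band: str
    reasons: tuple
    as_of: str
    sources_count: int


@dataclass(frozen=True)
class Decision:
    action: Action
    audit_ref: str


# Higher number means worse. Unrecognized bands are treated as worst case.
SEVERITY = {"clean": 0, "unknown": 1, "suspicious": 2, "malicious": 3}

# Hypothetical policy tier mapping a band to an action.
STANDARD_POLICY = {
    "clean": Action.ALLOW,
    "unknown": Action.CHALLENGE,
    "suspicious": Action.CHALLENGE,
    "malicious": Action.BLOCK,
}


def decide(band: str, policy: dict) -> Decision:
    """Deterministic band-to-action mapping; bands missing from the policy block."""
    return Decision(policy.get(band, Action.BLOCK), uuid.uuid4().hex)


def gated_fetch(url, check_url, reputation_for, sandboxed_fetch,
                policy=STANDARD_POLICY):
    """Run check_url, then reputation_for, then fetch only if the policy allows."""
    checked = check_url(url)
    host = checked["host"]
    # Look up the host alone, then the host together with each resolved IP.
    reps = [reputation_for(host, None)]
    reps += [reputation_for(host, ip) for ip in checked["resolved_ips"]]
    worst = max(reps, key=lambda r: SEVERITY.get(r.band, 3))
    decision = decide(worst.band, policy)
    body = None
    if decision.action is Action.ALLOW:
        body = sandboxed_fetch(checked["normalized_url"])
    return decision, body
```

Exposing only `gated_fetch` to the model, and never the raw fetcher, means the model cannot skip the checks no matter what the prompt says.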

Red-Team the Agent, Not the Blog Post

- Paste a benign internal wiki URL into a test ticket. The agent should refuse to fetch without a route allowlist.

- Paste a known phishing link with a defanged hxxp scheme and mixed punycode. The normalizer should catch it, as discussed in the IDN homograph guide.

- Try indirect prompt content inside a PDF hosted at a “clean” site that chains to a bad IP. The lesson: treat the whole chain as a threat, not the first text/plain response.
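The third test is easier to pass if the tool server vets every hop rather than only the first URL. A small sketch, with assumptions: `next_hop` is a hypothetical callable that returns the next URL discovered by the sandboxed fetcher (a redirect target or an embedded link) or `None` at the end of the chain, and `is_allowed` is whatever gate you already apply to a single URL.

```python
from typing import Callable, List, Optional, Tuple

MAX_HOPS = 5  # illustrative limit


def vet_chain(start_url: str,
              next_hop: Callable[[str], Optional[str]],
              is_allowed: Callable[[str], bool],
              max_hops: int = MAX_HOPS) -> Tuple[bool, List[str]]:
    """Walk a chain of URLs, gating each hop. Returns (ok, hops_visited)."""
    visited: List[str] = []
    url: Optional[str] = start_url
    while url is not None:
        # A loop or an overly long chain is itself treated as a failure.
        if url in visited or len(visited) >= max_hops:
            return False, visited
        visited.append(url)
        if not is_allowed(url):
            return False, visited
        url = next_hop(url)
    return True, visited
```

The returned hop list doubles as evidence for the audit log: it shows exactly where a chain that started at a clean site went bad.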

Why This is Also an SEO/AI-Answer Problem

The same clarity that makes your site retrievable in answer-engine optimization for security brands is the clarity that makes your playbooks and policies unambiguous to tools: explicit boundaries, no buzzwords, and public examples that match what your agent actually enforces. If the blog says “we never do X” and the runbook does X on Tuesdays, the agent will not save you.

Frequently asked questions

- Why do LLM agents need URL and domain checks?

- Agents may fetch user-supplied links, run tools, or call external APIs. Indirect prompt injection and malicious content hosted at plausible-looking domains can turn an “assistant” into a relay for malware downloads or data theft unless fetches are sandboxed, allowlisted, and checked against reputation data.

- Should the LLM "decide" if a URL is malicious?

- No. The model proposes; deterministic tools and policies enforce. A reputation API or a dedicated URL analysis sandbox should return structured facts that code—not prose—interprets.

- What is a minimal safe tool contract?

- Inputs: fully normalized URL; outputs: category, list hits, first/last seen, and a risk band; plus policy: block fetch, or fetch only in an isolated headless environment with no cookies or SSO tokens.

- How does this relate to application-layer LLM risks?

- You still need RAG hardening, output filtering, and auth boundaries. This article focuses on the network indicator side—pair it with the engineering perspective in this repo’s [LLM prompt injection and application security](/posts/injections-prompt-llm-securite-applicative) post.

- How can isMalicious be used in agentic workflows?

- Call isMalicious for fast IP, domain, URL, and hash reputation to gate automated browsing, enrichment steps, and SOAR "assistant" playbooks, matching the same API-first posture described in the [threat API comparison for 2026](/posts/best-threat-intelligence-api-comparison-2026).

