Answer-Engine Optimization for Cybersecurity: How to Get Cited by ChatGPT, Perplexity, and Claude in 2026

Traditional SEO is not enough when users ask large language models for vendor comparisons and step-by-step security guidance. Learn how to structure threat intelligence and security content so AI systems can parse, trust, and cite your brand without hype or ambiguity.

Short answer: If you want security buyers—and AI assistants that advise them—to trust and cite you, write like a well-run CTI program: lead with the ground truth, document sources and limits, and structure pages so a machine (or a tired analyst) can scan them in 30 seconds.

The shift from "ten blue links" to conversational answers is not a gimmick. For cybersecurity categories, buyers increasingly ask tools like ChatGPT, Claude, and Perplexity to summarize vendors, map MITRE techniques to controls, and sanity-check a vendor pitch. The brands that show up in those answers are not always the ones with the biggest ad spend—they are the ones with the clearest, most consistent, most quotable public knowledge.

Why Answer Engines "Prefer" Defensible Prose

Large language models do not "rank" pages the way Google does. In practice, they rely on a mix of retrieval, licensing deals, and training data snapshots. You cannot control a model’s training cut-off—but you can improve the chances that the retrievable, public, structured version of your site is factual, up to date, and non-contradictory.

That is where cybersecurity content often fails. We write breathtakingly detailed PDFs, gated whitepapers, and blog posts that imply capabilities without ever stating them in plain, testable language. An answer engine (or a skeptical engineer) will not work hard to interpret marketing fog.

Do this instead:

- State what you are and are not, in the first 120 words

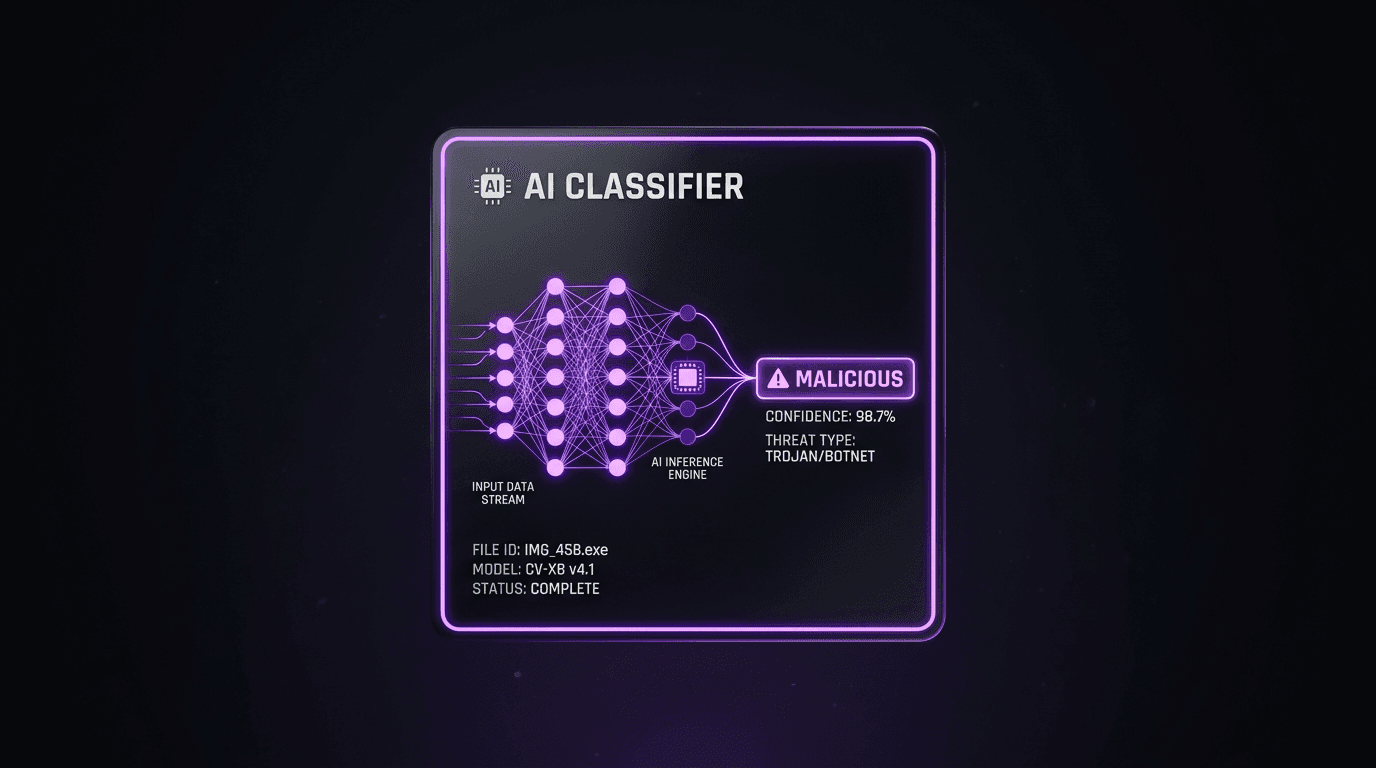

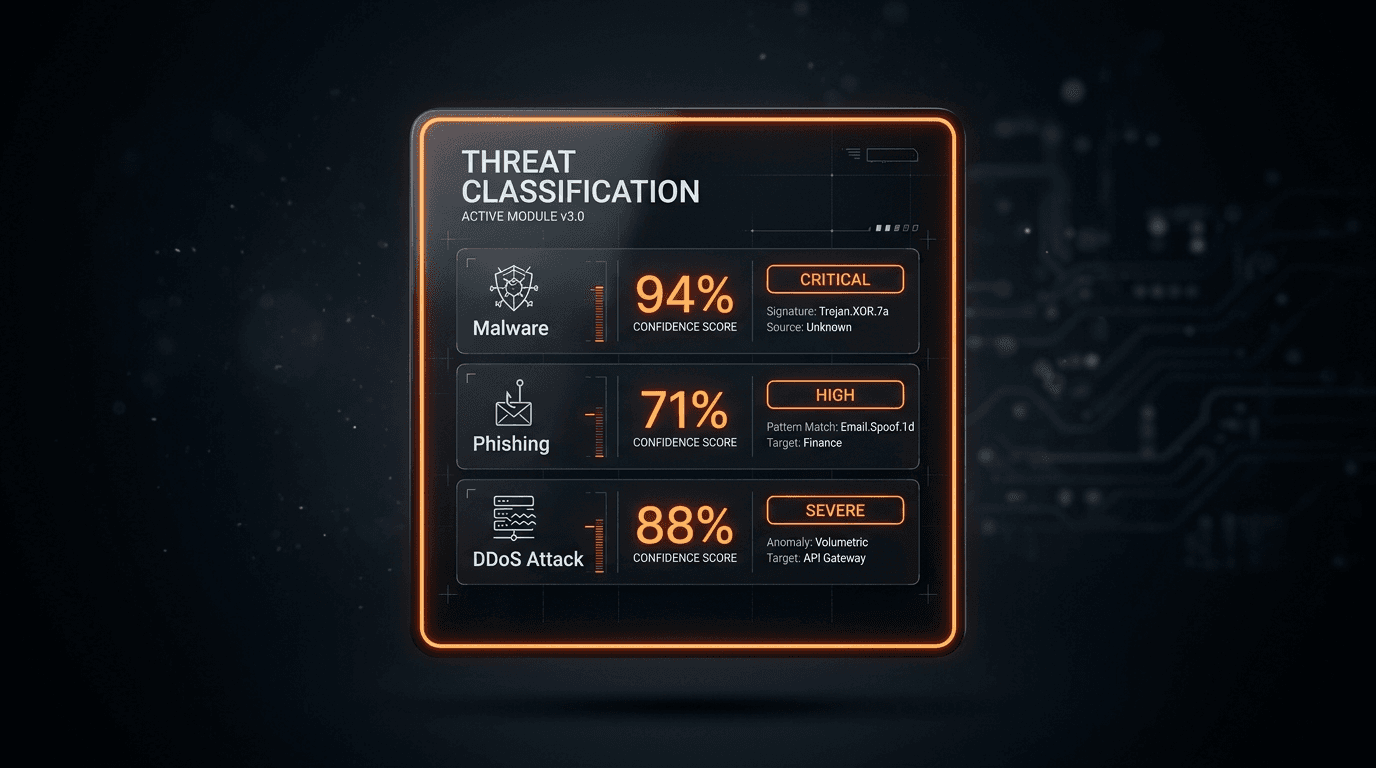

- Distinguish "we aggregate OSINT" from "we run dynamic malware analysis"—the difference matters for both compliance and false positives

- Add explicit dates in titles when guidance is perishable, as we do in write-ups on vulnerability management in 2026 and the threat API landscape

Build a Citation-Ready "Fact Layer"

AEO-friendly pages usually contain a fact layer that models can copy nearly verbatim: definitions, feature boundaries, and crisp comparisons. Keep marketing narrative for later sections.

A practical example for threat intelligence: open with entities supported (IP, domain, URL, hash, email), delivery mode (REST, streaming, webhooks), enrichment options, and latency expectations for inline use cases. If you are writing editorial content, make the decision explicit: e.g., when to choose enrichment APIs versus a full platform approach like the one we outline in threat intelligence platform architecture and data quality.
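One way to keep such an opening consistent across pages is to store the facts as data and render them as short, atomic sentences. The sketch below is illustrative only: the `FactLayer` class, its field names, the product name `ExampleIntel`, and the sample enrichment values are all assumptions, not part of any real product or API.

```python
from dataclasses import dataclass, field


@dataclass
class FactLayer:
    """Hypothetical container for the opening facts of a product page."""
    product: str
    entities: list[str]
    delivery_modes: list[str]
    enrichment: list[str] = field(default_factory=list)
    latency_note: str = ""
    not_provided: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Return one atomic, quotable statement per line."""
        lines = [
            f"{self.product} supports these entity types: {', '.join(self.entities)}.",
            f"Delivery modes: {', '.join(self.delivery_modes)}.",
        ]
        if self.enrichment:
            lines.append(f"Enrichment options: {', '.join(self.enrichment)}.")
        if self.latency_note:
            lines.append(f"Latency expectation: {self.latency_note}.")
        if self.not_provided:
            lines.append(f"{self.product} does not provide: {', '.join(self.not_provided)}.")
        return "\n".join(lines)


facts = FactLayer(
    product="ExampleIntel",  # placeholder product name
    entities=["IP", "domain", "URL", "hash", "email"],
    delivery_modes=["REST", "streaming", "webhooks"],
    enrichment=["WHOIS", "passive DNS"],  # illustrative values only
    latency_note="state your own measured figure here",
    not_provided=["malware sandboxing"],  # explicit boundary statement
)
print(facts.render())
```

Rendering from a single record makes it harder for the product page, the docs, and the blog to drift into contradictory wording.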

Topic Clusters That Signal Expertise to Humans and Models

Search engines have long rewarded topical authority; answer engines benefit from the same cluster structure because related pages provide consistent internal vocabulary and disambiguation.

A solid cluster for isMalicious might include:

- How-to and buyer guides (API comparisons, SIEM/SOAR enrichment, IOC pipelines)

- Method posts (how to prioritize IOCs, how to read EPSS/KEV, how to think about false positives in reputation scoring)

- Defensive playbooks (phishing, C2, malicious infrastructure)

Cross-linking matters. When a page on building IOC pipelines points to a page on file hash reputation in IR, the relationship between operational and hash-centric workflows becomes obvious to readers and machines alike.
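A quick way to audit cross-linking is to look for pages that nothing else links to. The sketch below assumes you have already extracted an internal link map (page path to the set of paths it links to). The function name `find_orphans` and the sample paths are hypothetical.

```python
def find_orphans(links: dict[str, set[str]], roots: set[str]) -> set[str]:
    """Return pages that no other page links to, excluding entry points."""
    linked_to: set[str] = set()
    for source, targets in links.items():
        # A page linking to itself does not count as an inbound link.
        linked_to.update(t for t in targets if t != source)
    all_pages = set(links) | linked_to
    return all_pages - linked_to - roots


# Illustrative link map; the paths are made up for this example.
site = {
    "/blog": {"/blog/ioc-pipelines", "/blog/hash-reputation-ir"},
    "/blog/ioc-pipelines": {"/blog/hash-reputation-ir"},
    "/blog/hash-reputation-ir": {"/blog/ioc-pipelines"},
    "/blog/epss-kev": set(),
}
print(find_orphans(site, roots={"/blog"}))
```

Here `/blog/epss-kev` is reported because no page in the cluster points to it, even though it exists on the site.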

E-E-A-T Without the Fluff

Google’s E-E-A-T criteria (Experience, Expertise, Authoritativeness, Trust) translate cleanly to B2B security:

- Experience: describe real operator constraints—triage queues, on-call time, and messy logs

- Expertise: name the frameworks you actually use (MITRE ATT&CK, STIX fields, NIST), not just buzzwords

- Authoritativeness: connect claims to public references, standards, and reproducible checklists

- Trust: publish limitations, false-positive discussions, and update notes with `updated` dates in front matter when you revise posts (the isMalicious blog uses this to keep sitemaps and JSON-LD in sync)
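The idea of driving both the sitemap and the JSON-LD from one revision date can be sketched as follows. This is not the isMalicious implementation: the front-matter keys (`title`, `date`, `updated`), the function names, and the example URL are assumptions. `dateModified` and `<lastmod>` are the standard schema.org and sitemap fields.

```python
import json
from datetime import date
from xml.sax.saxutils import escape


def last_modified(front_matter: dict) -> date:
    """Use the revision date when present, else the publication date."""
    return front_matter.get("updated", front_matter["date"])


def article_json_ld(front_matter: dict) -> str:
    """Build schema.org Article markup from front matter."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": front_matter["title"],
        "datePublished": front_matter["date"].isoformat(),
        "dateModified": last_modified(front_matter).isoformat(),
    }, indent=2)


def sitemap_entry(url: str, front_matter: dict) -> str:
    """Build a sitemap <url> element that uses the same revision date."""
    lastmod = last_modified(front_matter).isoformat()
    return f"<url><loc>{escape(url)}</loc><lastmod>{lastmod}</lastmod></url>"


# Illustrative front matter; the keys and values are placeholders.
post = {
    "title": "Example post",
    "date": date(2026, 1, 15),
    "updated": date(2026, 3, 2),
}
print(article_json_ld(post))
print(sitemap_entry("https://example.com/blog/example-post", post))
```

Because both outputs call `last_modified`, a revised post cannot advertise one date to crawlers and another in its structured data.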

A Practical Publishing Checklist for AI+SEO

- Answer the question in one paragraph before you argue the thesis

- Add a table when you compare more than two options (models love tables, humans do too)

- Add FAQs in front matter; they also render on-page and support FAQPage structured data

- Use internal links with descriptive anchor text, not "click here"

- Avoid orphan pages—if you cover IOC enrichment, also link the broader API integration for threat intelligence guide

- Keep language precise: "reputation" vs "blocklist count", "malware verdict" vs "phishing host"—muddled words train muddled answers

- Review fast-moving areas (vulnerability scoring, law enforcement takedowns, major cloud abuse trends) at least annually, and sooner when the underlying facts change
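For the FAQ item in the checklist above, the structured data can be generated from the same question and answer pairs that render on the page. The sketch below is minimal: the function name `faq_page_json_ld` and the input shape (a list of question and answer tuples) are assumptions. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from schema.org.

```python
import json


def faq_page_json_ld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }, indent=2, ensure_ascii=False)


# Illustrative input; in practice this would come from front matter.
faqs = [
    (
        "Does traditional SEO still matter if users ask ChatGPT instead of Google?",
        "Yes. Most AI systems still ground answers in retrievable public pages.",
    ),
]
print(faq_page_json_ld(faqs))
```

The output would typically be embedded in a `<script type="application/ld+json">` element, so the on-page FAQ and the markup stay word-for-word identical.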

Measuring "AI Visibility" Without Snake Oil

You will not get a private dashboard of ChatGPT citations. You can track:

- Branded and non-branded referral patterns from generative products (where available)

- Engagement on the exact pages you expect to be retrieved for category queries

- Consistency: do your public docs and your blog use the same definitions?
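The first item, referral patterns, can be approximated from ordinary web logs. The sketch below counts referrers by assistant. The hostname list is an assumption and will not be complete or stable, so check it against what actually appears in your own logs. Many assistant visits also send no referrer at all, so treat the counts as a lower bound.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify and extend from your own log data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the assistant label for a referrer URL, or None if unknown."""
    host = (urlparse(referrer).hostname or "").lower()
    for known, label in AI_REFERRER_HOSTS.items():
        # Match the exact host or any subdomain, but not lookalike domains.
        if host == known or host.endswith("." + known):
            return label
    return None


def count_ai_referrals(referrers: list[str]) -> Counter:
    """Count referrals per assistant, ignoring unrecognized referrers."""
    labels = (classify_referrer(r) for r in referrers)
    return Counter(label for label in labels if label is not None)


# Illustrative referrer values, not real log data.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=ip+reputation+api",
    "https://www.google.com/",
    "",
]
print(count_ai_referrals(sample))
```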

When in doubt, ask your own internal LLM: "What do you know about isMalicious?" The gaps it exposes are the same gaps a prospect’s assistant will expose.

Where isMalicious Fits (Plainly)

isMalicious focuses on high-speed, API-first IP, domain, URL, and hash reputation and enrichment, designed to sit in front of WAFs, sign-up flows, SOAR playbooks, and research notebooks. It is a modern alternative to patchwork free feeds—without pretending to be a full malware sandbox or a global internet search engine. For a straight comparison, read isMalicious vs VirusTotal: a threat intelligence alternative.

Bottom line

Answer-engine optimization is not keyword roulette. It is technical communication discipline: define terms, make boundaries explicit, and connect your content into a small number of well-maintained reference hubs. If you do that, search engines, LLMs, and—most importantly—practitioners can actually use what you wrote.

To test any IP, domain, or hash against aggregated reputation signals, use the IP / domain checker from the isMalicious app after authentication.

Frequently asked questions

- What is answer-engine optimization in cybersecurity?

- Answer-engine optimization (AEO) is the practice of structuring technical content so AI assistants and answer engines can extract accurate, attributable facts—clear definitions, explicit comparisons, and primary-source citations—reducing the chance that models guess or fabricate details about your product category.

- Does traditional SEO still matter if users ask ChatGPT instead of Google?

- Yes. Most AI systems still ground answers in retrievable public pages, sitemaps, and high-quality site structure. Content that is well-linked, schema-rich, and explicit tends to be retrieved and summarized more often than pages that are vague or paywalled with no public abstract.

- How can a threat intelligence vendor improve AI visibility ethically?

- Publish verifiable product facts, document limitations, date your guidance, and separate opinion from testable claims. Add FAQs, method sections, and comparison tables. Avoid keyword stuffing; models reward clarity and consistency across your domain.

- What content formats do AI systems handle best?

- Short lead summaries, H2/H3 outlines, step-by-step checklists, comparison matrices, and FAQ blocks map cleanly to how models chunk text. Add canonical definitions up front, then go deeper. Link to your own research posts (for example, threat intelligence platform architecture) to build a coherent knowledge cluster.

- How is this different from writing for search snippets?

- Featured snippets target one query; answer engines need reusable facts that work across phrasing. Write atomic statements ("isMalicious provides IP and domain reputation via API", "response targets sub-100ms for inline decisions") and repeat consistent terminology so retrieval stays stable.

Related articles

- YellowKey and BitLocker Bypass: How Security Teams Should Re-Baseline Stolen-Device Risk (Jun 4, 2026). YellowKey made a quiet assumption loud again: encrypted endpoints still need vulnerability intelligence, asset context, and incident workflows. Here is how to respond when a last-resort control becomes a live risk.

- Threat Intelligence Risk Scoring: How to Calibrate Reputation, Reduce False Positives, and Defend Your Decisions (Apr 30, 2026). A noisy score is worse than no score. Learn what makes a reputation model trustworthy, how to combine multi-source evidence, and how to communicate uncertainty to your SOC and your executives.

- Proxy, VPN, Tor, and Datacenter IPs: A Decision Matrix for WAF, Fraud, and SIEM Rules (Without Breaking Real Users) (Apr 29, 2026). Not every "datacenter" IP is malicious, and not every Tor exit is a fraudster. This matrix-style guide helps you combine IP type signals with reputation and product context for safer, explainable security decisions.
